Why Pfizer Makes Billions While 95% of AI Projects 'Fail': The Prototype Secret Tech Companies Refuse to Learn

What Pharma Knows About Failing Fast That Tech Companies Don't

Day #34 of the #100WorkDays100Articles challenge

Pfizer burns through $2.6 billion testing 5,000 drug compounds. 4,999 fail completely. They still make $100+ billion in revenue.

Meta spends $13.7 billion building one metaverse. It fails. Stock drops 25% overnight.

Tesla tests 47 different battery designs for 18 months. 46 fail. They revolutionize electric vehicles.

Theranos builds one "revolutionary" blood test. It fails. $945 million vanished, founder goes to prison.

Two approaches, two outcomes: one tests cheap hypotheses and expects failure; the other builds expensive solutions and expects success.

After 25 years of watching companies choose the Theranos path, here's what I learned about the difference between intelligent failure and stupid failure.

The MIT Report Gets It Backwards: Why 95% "Failure" is Actually Success

Before we dive deeper, let's address the elephant in the room. Everyone's talking about MIT's report claiming "95% of generative AI pilots at companies are failing." But here's the problem: they're measuring failure the wrong way.

What MIT Actually Found

The research—based on 150 interviews with leaders, a survey of 350 employees, and an analysis of 300 public AI deployments—paints a clear divide between success stories and stalled projects. MIT defined "failure" as pilots that don't show "deployment beyond pilot phase with measurable KPIs" and an "ROI impact measured six month post pilot."

But wait. This is exactly backwards from pharmaceutical thinking.

Why MIT's "Failure" Definition is Flawed

The methodology didn't seem to account for crucial business impacts like efficiency gains, cost reductions, customer churn reduction, lead conversion improvements, or sales pipeline velocity.

More importantly, this narrow focus on direct P&L impact within just six months ignores many other critical ways AI delivers value.

Think about it: If pharmaceutical companies used MIT's success criteria, they'd consider their entire industry a failure because 99.9% of drug compounds fail to reach market.

The Real Story: Pharma vs. MIT Thinking

MIT's Framework (Backwards):

Expect pilots to succeed immediately

Measure ROI within 6 months

Consider learning from failure as "waste"

95% "failure" rate = industry crisis

Pharmaceutical Framework (Correct):

Expect 99.9% of early tests to fail

Measure learning, not immediate ROI

Consider intelligent failure as essential

99.9% failure rate = necessary path to breakthrough

What the "Successful" 5% Actually Did

"Some large companies' pilots and younger startups are really excelling with generative AI," said Aditya Challapally, the report's lead author. Startups led by 19- or 20-year-olds, for example, "have seen revenues jump from zero to $20 million in a year," he said. "It's because they pick one pain point, execute well, and partner smartly with companies who use their tools."

Notice what they did:

Focused on one specific problem (not broad deployment)

Executed well (proper implementation methodology)

Partnered smartly (bought solutions vs. building from scratch)

This is exactly the pharmaceutical prototype approach applied to AI.

The Real Problem: Wrong Expectations, Not Wrong Technology

The biggest problem, the report found, was not that the AI models weren't capable enough (although executives tended to think that was the problem).

The real issues:

Companies surveyed were often hesitant to share failure rates... "Almost everywhere we went, enterprises were trying to build their own tool," he said, but the data showed purchased solutions delivered more reliable results.

The 95% miss their targets not because the AI models fail to work as intended, but because generic AI tools like ChatGPT don't adapt to the workflows already established in corporate environments.

Translation: Companies are skipping the prototype phase and jumping straight to expensive custom MVPs.

The Pharmaceutical Reframe

Instead of panicking about "95% failure rates," we should celebrate that companies are finally testing hypotheses at scale. The problem isn't that 95% of AI pilots fail—it's that companies aren't treating those failures as valuable learning.

Pharmaceutical companies would look at MIT's data and say: "Great! Out of every 5,000 pilots, you've identified 4,750 approaches that don't work and 250 that do. Now let's study why those 250 succeeded and scale those patterns."

Tech companies look at the same data and say: "AI is overhyped and we should slow down investment."

One approach creates billion-dollar breakthroughs. The other creates analysis paralysis.

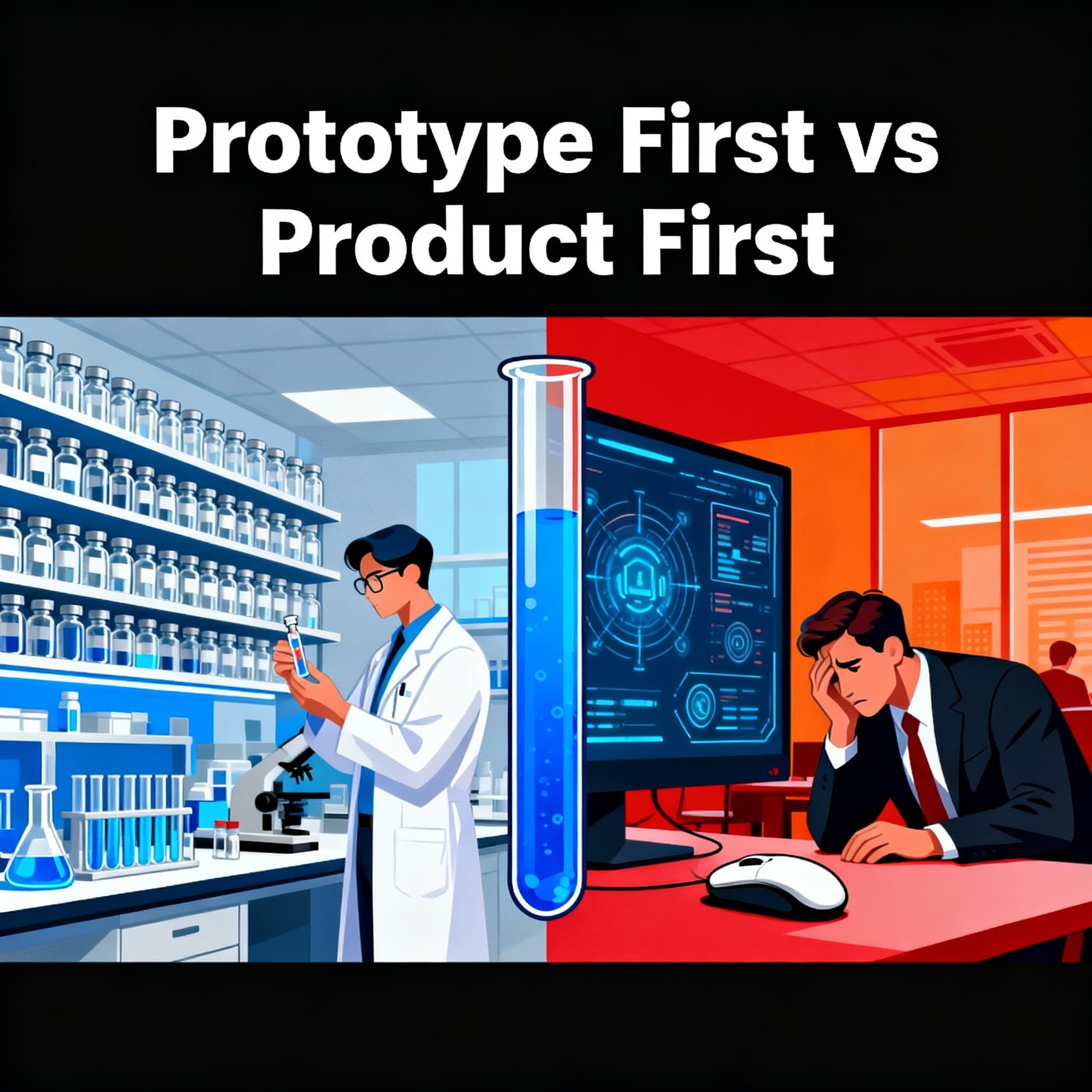

Most organizations confuse building solutions with understanding problems. They throw resources at MVPs when they should be testing hypotheses with prototypes. Meanwhile, pharmaceutical companies have been quietly perfecting the art of intelligent failure for decades.

Tech companies build one expensive thing and pray it works. Pharma companies build hundreds of cheap things, expect most to fail, and systematically learn their way to success.

Guess which approach has better ROI?

Prototype: "Am I solving the right problem?"

Purpose: Validate assumptions and test hypotheses

Investment: 5-15% of total project budget

Timeline: Days to weeks

Success metric: Learning, not functionality

Audience: Internal stakeholders and select users

MVP: "Can I solve this problem profitably?"

Purpose: Deliver minimum viable value to real users

Investment: 20-40% of total project budget

Timeline: Weeks to months

Success metric: User adoption and business validation

Audience: Real customers paying real money

Real-World Prototype vs. MVP Disasters

The Intelligent Failure: Johnson & Johnson's COVID Vaccine

Hypothesis: "Can we create a single-dose COVID vaccine?"

Prototype investment: $456M across 47 different formulations

Failed prototypes: 42 (89% failure rate)

Survivors to clinical trials: 5

Winner: 1 vaccine approved, $2.3B revenue in first year

Cost per failed hypothesis: $10.9M

Revenue multiple on prototype investment: about 5x ($2.3B / $456M)
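For readers who want to check the arithmetic, here is a minimal Python sketch that recomputes the case-study figures exactly as quoted above (47 formulations, 42 failures, $456M, $2.3B). The inputs come from the text and are not independently verified. Note that dividing first-year revenue by prototype spend gives a revenue multiple of about 5x, which is not the same thing as a profit-based ROI.

```python
# Inputs as quoted in the case study above (not independently verified).
formulations_tested = 47
failed_prototypes = 42
prototype_investment_musd = 456.0   # $456M
first_year_revenue_musd = 2_300.0   # $2.3B

# Share of tested formulations that failed.
failure_rate = failed_prototypes / formulations_tested
# Total prototype spend spread over the failed hypotheses.
cost_per_failure_musd = prototype_investment_musd / failed_prototypes
# Revenue (not profit) relative to prototype spend.
revenue_multiple = first_year_revenue_musd / prototype_investment_musd

print(f"Failure rate: {failure_rate:.0%}")
print(f"Cost per failed hypothesis: ${cost_per_failure_musd:.1f}M")
print(f"Revenue multiple: {revenue_multiple:.2f}x")
```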

The Stupid Failure: Quibi's $1.75B Bet

Hypothesis: "People want premium short-form mobile video"

Prototype investment: virtually none (almost no user testing before launch)

MVP investment: $1.75 billion

Market validation: After launch (too late)

Result: Shut down in 6 months

Cost of not testing hypothesis: $1.75 billion

ROI: -100%

The Current Disaster: Enterprise AI Implementations

Traditional Tech Approach:

Build complete AI system: $2-5M

Test with real users: After deployment

Discover it solves wrong problem: After budget spent

Success rate: 8%

Pharma-Inspired Approach:

Test 50 AI interaction hypotheses: $250K

Build prototypes for 5 survivors: $500K

MVP only the proven winner: $1.5M

Success rate: 28% (3.5x improvement)

The Pharma Prototype Masterclass: How to Test "Will This Kill People?"

Before any drug reaches your medicine cabinet, it has survived this gauntlet:

Pre-Clinical Testing (The Prototype Phase):

5,000-10,000 compounds initially screened

Investment per compound: $50K-100K (tiny compared to final cost)

Success rate to next phase: 0.1% (99.9% failure rate)

Core hypothesis: "Will this kill the patient before helping them?"

Phase I Trials (Still Prototyping):

Survivors from screening: 5-10 compounds

Investment per compound: $1-3M

Success rate to Phase II: 70%

Core hypothesis: "What's the maximum dose that won't kill healthy people?"

Phase II Trials (Getting Serious):

Investment per compound: $7-20M

Success rate to Phase III: 33%

Core hypothesis: "Does this actually work better than doing nothing?"

Phase III Trials (The Real MVP):

Investment: $50-100M+ per compound

Success rate to market: 67%

Core hypothesis: "Can we prove this works consistently across diverse populations?"

The Math:

Total development cost: $1.3B average per successful drug

Time investment: 10-15 years

Compounds tested: 5,000-10,000

Success rate: One in 5,000 compounds reaches market

And they're still profitable
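The per-stage pass rates above compound multiplicatively. This small Python sketch chains them for a batch of 5,000 screened compounds. The rates are the ones listed in the gauntlet; the `run_funnel` helper is a hypothetical illustration, not an industry model.

```python
# Stage-to-stage pass rates as listed in the gauntlet above.
stages = [
    ("Pre-clinical screening", 0.001),
    ("Phase I", 0.70),
    ("Phase II", 0.33),
    ("Phase III", 0.67),
]

def run_funnel(initial_candidates: float, stages):
    """Return (stage name, expected survivors) after each stage, in order."""
    survivors = initial_candidates
    history = []
    for name, pass_rate in stages:
        survivors *= pass_rate          # only this fraction advances
        history.append((name, survivors))
    return history

for name, expected in run_funnel(5_000, stages):
    print(f"after {name:<24} {expected:6.2f} expected survivors")
```

Chaining 0.1% × 70% × 33% × 67% leaves roughly 0.77 expected approvals per 5,000 compounds, in the same ballpark as the one-in-5,000 figure.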

What Tech Can Learn from "Will This Kill People?" Testing

Pharma's Conscious Hypothesis Framework

Primary Hypothesis (Always First): Safety

"Will this cause more harm than benefit?"

Tested with smallest possible exposure

Failure = immediate stop, no ego attachment

Secondary Hypothesis: Efficacy

"Does this actually solve the problem it claims to solve?"

Tested only after safety is established

Multiple measurement approaches

Tertiary Hypothesis: Scalability

"Can this work consistently across diverse populations?"

Tested with increasingly complex scenarios

Real-world condition simulation
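One way to picture "safety first, then efficacy, then scalability" in a tech setting is as an ordered series of gates that stops at the first failure. The sketch below assumes hypothetical metrics (harm reports, percentage improvement over baseline, user segments tested) and deliberately simple pass conditions; substitute whatever measures your team actually tracks.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

@dataclass
class Candidate:
    name: str
    harm_reports: int       # hypothetical safety signal (escalations, complaints)
    improvement_pct: float  # hypothetical efficacy: % better than current baseline
    segments_passing: int   # hypothetical: user segments where results held up
    segments_tested: int

# Gates run in a fixed order: safety, then efficacy, then scalability.
GATES: List[Tuple[str, Callable[[Candidate], bool]]] = [
    ("safety", lambda c: c.harm_reports == 0),
    ("efficacy", lambda c: c.improvement_pct > 0),
    ("scalability", lambda c: c.segments_tested > 0 and c.segments_passing == c.segments_tested),
]

def first_failed_gate(c: Candidate) -> Optional[str]:
    """Return the name of the first gate the candidate fails, or None if it passes all."""
    for gate_name, passes in GATES:
        if not passes(c):
            return gate_name  # immediate stop: later gates are never evaluated
    return None

candidates = [
    Candidate("flow-A", harm_reports=2, improvement_pct=15.0, segments_passing=3, segments_tested=3),
    Candidate("flow-B", harm_reports=0, improvement_pct=-4.0, segments_passing=3, segments_tested=3),
    Candidate("flow-C", harm_reports=0, improvement_pct=8.0, segments_passing=3, segments_tested=3),
]
for c in candidates:
    print(c.name, first_failed_gate(c) or "passed all gates")
```

Flow A never gets an efficacy review because it failed on safety, mirroring "failure = immediate stop."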

The Tech Industry's Backwards Approach

Most tech companies test in reverse:

Build the scalable solution first ($2-5M investment)

Hope it's effective (pray for product-market fit)

Discover harmful side effects later (user privacy breaches, algorithmic bias, social manipulation)

Result: 92% failure rate in enterprise AI implementations.

The Pharma Prototype Philosophy Applied to Technology

Stage 1: "Will This Kill the Business?" (Pharma Pre-Clinical)

Investment: 5-10% of total budget

Timeline: 2-4 weeks

Prototypes Created: 50-100 concept tests

Example - AI Customer Service Platform:

Test 50 different conversation flows with paper prototypes

Screen for approaches that frustrate customers

Eliminate concepts that create more problems than they solve

Success criteria: Find 3-5 approaches that don't actively harm user experience

Pharma Parallel: Screen 5,000 compounds, expecting 99.9% to fail safely and cheaply.

Stage 2: "What's the Minimum Effective Dose?" (Pharma Phase I)

Investment: 10-15% of total budget

Timeline: 4-8 weeks

Prototypes Created: 10-20 functional tests

Example:

Build 10 different AI interaction prototypes

Test with 20-50 internal users each

Measure: minimum feature set that creates positive outcome

Success criteria: Identify optimal interaction patterns without overwhelming users

Pharma Parallel: Test maximum tolerable dose on healthy volunteers before treating sick patients.

Stage 3: "Does This Actually Work?" (Pharma Phase II)

Investment: 15-25% of total budget

Timeline: 2-3 months

Prototypes Created: 3-5 comprehensive tests

Example:

Build 3-5 complete workflow prototypes

Test with 100-500 real customers

Compare against existing solutions

Success criteria: Measurably better outcomes than current state

Pharma Parallel: Controlled trials proving the drug works better than placebo.

Stage 4: "Can This Scale Safely?" (Pharma Phase III)

Investment: 40-60% of total budget

Timeline: 6-12 months

The Real MVP: Full system build and deployment

Pharma Parallel: Large-scale trials across diverse populations before market release.

My Personal Confession: I'm Doing This Wrong Right Now

Here's the embarrassing truth: While writing this article about prototype-first thinking, I caught myself doing exactly the opposite.

What I should be doing (Pharma approach):

Test 20 different business hypotheses with quick interviews

Build simple prototypes for the 3-5 that resonate

Create MVP only for the validated winner

What I'm actually doing (Meta approach):

Obsessing over the "perfect" business model

Building complete strategy before testing demand

Assuming the market wants what I think it needs

The irony: I spent years watching companies make this exact mistake. When it's your own transition, the ego trap hits different.

The reality check: Even writing about prototype-first thinking, I'm still tempted to build first and validate later.

Sometimes you have to write the article to realize you're not following your own advice.

The Hypothesis Testing Framework That Actually Works

Based on research and my enterprise experience, here's the framework I wish I'd known 20 years ago:

Stage 1: Assumption Mapping (Week 1)

Investment: 2-5% of total budget

Activities:

List your biggest assumptions about user needs

Rank assumptions by risk (high uncertainty + high impact = test first)

Design cheapest possible tests for top 3 assumptions
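A minimal way to do the Stage 1 ranking, assuming you score each assumption from 1 to 5 on uncertainty and on impact. Multiplying the two scores is one common convention (an assumption here, not the only option), and the example assumptions are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Assumption:
    statement: str
    uncertainty: int  # 1 = well evidenced ... 5 = pure guess
    impact: int       # 1 = minor ... 5 = project fails if wrong

    @property
    def risk(self) -> int:
        # Uncertain AND important assumptions float to the top.
        return self.uncertainty * self.impact

def top_risks(assumptions, n=3):
    """Return the n riskiest assumptions; ties keep their original order."""
    return sorted(assumptions, key=lambda a: a.risk, reverse=True)[:n]

backlog = [
    Assumption("Agents will trust AI-drafted replies", 4, 5),
    Assumption("Customers prefer chat over email", 2, 3),
    Assumption("Ticket data is clean enough to train on", 5, 4),
    Assumption("Legal will approve automated responses", 3, 5),
    Assumption("Users will find the new button", 2, 2),
]
for a in top_risks(backlog):
    print(a.risk, a.statement)
```

The three assumptions printed are the ones that get the cheapest possible tests first.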

Stage 2: Rapid Hypothesis Testing (2-4 weeks)

Investment: 5-10% of total budget

Activities:

Build "fake door" tests for demand validation

Create paper prototypes for user flow testing

Run surveys and interviews with target users

Success Criteria: 70%+ of assumptions validated OR major pivot discovered
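The Stage 2 exit rule can be written as a simple gate. This sketch assumes a fake door test is summarized as visitors and clicks on a call-to-action for a feature that doesn't exist yet. The 3% click-through bar in the usage example is a hypothetical threshold, and the function names are illustrative; the 70% validation rule comes from the success criteria above.

```python
from typing import Dict

def fake_door_conversion(visitors: int, clicks: int) -> float:
    """Share of visitors who clicked a call-to-action for a feature that doesn't exist yet."""
    if visitors <= 0:
        raise ValueError("a fake door test needs at least one visitor")
    return clicks / visitors

def stage2_gate(results: Dict[str, bool], pivot_found: bool, threshold: float = 0.70) -> bool:
    """Pass if at least `threshold` of tested assumptions held up, or a major pivot was found."""
    if not results:
        return pivot_found
    validated_share = sum(results.values()) / len(results)
    return validated_share >= threshold or pivot_found

# Hypothetical numbers: 1,200 visitors saw the fake door, 54 clicked it.
conversion = fake_door_conversion(visitors=1_200, clicks=54)
results = {
    "demand": conversion >= 0.03,  # hypothetical 3% click-through bar
    "user_flow": True,             # paper prototype walkthroughs went smoothly
    "interview_signal": False,     # interviews did not confirm the pain point
}
print(stage2_gate(results, pivot_found=False))  # 2 of 3 validated is below 70%
```

Treating a discovered pivot as a pass is deliberate: learning that the problem is different from what you assumed is a successful Stage 2 outcome.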

Stage 3: Functional Prototype (1-3 weeks)

Investment: 10-15% of total budget

Activities:

Build working prototype addressing validated problems

Test with 10-50 real users in controlled environment

Success Criteria: Core value proposition confirmed + users willing to pay

Stage 4: MVP Development (2-6 months)

Investment: 25-35% of total budget

Activities:

Build minimum feature set that delivers real value

Launch to paying customers

Success Criteria: Product-market fit indicators + sustainable unit economics

The Economics of Intelligent Failure

Pharma's Failure Investment Strategy:

Spend $50K to kill bad ideas quickly

Spend $1M to test promising ideas safely

Spend $20M to validate working solutions

Spend $100M only on proven winners

Expected value: +$1.3B per successful drug

Tech's All-or-Nothing Strategy:

Spend $2-5M building complete solutions

Hope they work

Discover problems after launch

Expected value: -$500K (you lose money on average)

The Pharma-Inspired Tech Approach:

Spend $100K testing 50 hypotheses (expect 45 to fail)

Spend $500K validating 5 survivors

Spend $2M building 1-2 proven solutions

Expected value: +$1.2M with 3.5x higher success rate
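The expected values above depend on how much a success is worth, which the comparison leaves implicit. Here is a sketch assuming expected value = success probability × payoff − total spend, using the spend and success rates quoted in this section. Taking $3.5M as the midpoint of the $2-5M range is an assumption, and so is the $20M payoff in the example. The break-even payoff shows how large a win each strategy needs before it stops losing money on average.

```python
def expected_value(success_prob: float, payoff: float, cost: float) -> float:
    """Probability-weighted payoff minus total spend (all in $M)."""
    return success_prob * payoff - cost

def break_even_payoff(success_prob: float, cost: float) -> float:
    """Payoff per success at which expected value is exactly zero."""
    return cost / success_prob

# Spend ($M) and success rates from the strategies above.
all_or_nothing = {"cost": 3.5, "p": 0.08}      # assumed midpoint of $2-5M, 8% success
staged = {"cost": 0.1 + 0.5 + 2.0, "p": 0.28}  # $100K + $500K + $2M, 28% success

hypothetical_payoff = 20.0  # $20M per success: purely illustrative
for label, s in [("all-or-nothing", all_or_nothing), ("staged", staged)]:
    be = break_even_payoff(s["p"], s["cost"])
    ev = expected_value(s["p"], hypothetical_payoff, s["cost"])
    print(f"{label:15} break-even payoff ${be:.1f}M, EV at $20M payoff ${ev:+.1f}M")
```

Under these inputs, the all-or-nothing build needs a payoff of roughly $44M per success to break even, while the staged approach breaks even near $9M.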

The Hypothesis Quality Revolution

Pharmaceutical-Grade Hypothesis Formation

Unconscious Tech Hypotheses:

"How can we use AI to improve customer service?"

"What features do users want?"

"How can we differentiate from competitors?"

Conscious Pharma-Inspired Hypotheses:

"What's the minimum AI interaction that improves customer outcomes without creating new problems?"

"What are the unintended consequences of automating human connection?"

"How do we measure if we're actually helping people vs. just reducing costs?"

The Safety-First Mentality

Pharma companies start with "First, do no harm."

Tech companies start with "Move fast and break things."

The result:

Pharma: 67% success rate in final trials, rigorous safety protocols

Tech: 8% enterprise AI success rate, regular scandals about harmful impacts

The Million-Dollar Question

Every executive should ask their team:

"If pharmaceutical companies can make billions while expecting 99.9% of their ideas to fail, why are we still terrified of small, cheap failures instead of big, expensive ones?"

The answer reveals whether you're building a Pfizer or a Theranos.

Here's the test: If your "prototype" costs more than $100K or takes more than 8 weeks, you're not prototyping. You're building expensive solutions and calling them cheap tests.

Pfizer mindset: "Let's kill 4,999 bad ideas with $50K each so we can bet $1B on the winner."

Theranos mindset: "Let's bet $945M on our first idea because we're definitely right."

Your choice: Which mindset is running your next AI project?
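The $100K / 8-week rule of thumb from the test above can be encoded as a one-line check. This is a minimal sketch: the thresholds are defaults you could tune, and the function name and example projects are hypothetical.

```python
def is_real_prototype(cost_usd: float, duration_weeks: float,
                      max_cost_usd: float = 100_000, max_weeks: float = 8) -> bool:
    """Rule of thumb from the text: over $100K or over 8 weeks is not a prototype."""
    return cost_usd <= max_cost_usd and duration_weeks <= max_weeks

# Hypothetical projects: (cost in USD, duration in weeks)
for cost, weeks in [(40_000, 3), (250_000, 6), (60_000, 12)]:
    verdict = "prototype" if is_real_prototype(cost, weeks) else "expensive build in disguise"
    print(f"${cost:,} over {weeks} weeks -> {verdict}")
```

Either limit alone is enough to disqualify a project: a cheap effort that drags on for three months has stopped being a quick test.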

Real-World Application: A Current Example

Instead of building a complete consulting practice first, here's how the pharma-inspired approach would work:

Pre-Clinical Phase:

Test 20+ different business model hypotheses with potential clients

Investment per test: $500-2,000

Expected failure rate: 80%+

Hypothesis: "Which approaches actually solve real market problems?"

Phase I:

Test 3-5 surviving concepts with pilot clients

Investment per test: $5K-10K

Hypothesis: "What's the minimum viable service that creates measurable value?"

Phase II:

Full service validation with paying clients

Investment: $25K-50K total

Hypothesis: "Can this consistently deliver better results than alternatives?"

Phase III (Only if Phase II succeeds):

Scale to full business

Investment: $100K-200K

Hypothesis: "Can this approach work across different client types and industries?"

Cost to test fundamental hypothesis: $40K

Traditional approach cost: $200K+

Learning multiplier: 5x more insights per dollar invested
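To see where the $40K figure comes from and what each phase commits you to, the sketch below sums the cost ranges listed in this example. It assumes exactly 20 pre-clinical tests and 3-5 Phase I concepts, and `cumulative_spend` is a hypothetical helper. Stopping after pre-clinical costs $10K-40K, so the $40K figure matches the top of that range; running through Phase II costs $50K-140K.

```python
# (low, high) cost ranges per phase from the consulting example, in USD.
phases = {
    "pre-clinical": (20 * 500, 20 * 2_000),  # assumed 20 tests at $500-2,000 each
    "phase I": (3 * 5_000, 5 * 10_000),      # 3-5 concepts at $5K-10K each
    "phase II": (25_000, 50_000),
    "phase III": (100_000, 200_000),
}

def cumulative_spend(phases, stop_after):
    """Sum (low, high) costs of every phase up to and including `stop_after`."""
    low = high = 0
    for name, (low_cost, high_cost) in phases.items():  # dicts keep insertion order
        low += low_cost
        high += high_cost
        if name == stop_after:
            return low, high
    raise KeyError(stop_after)

for phase in phases:
    low, high = cumulative_spend(phases, phase)
    print(f"stop after {phase:<12} ${low:,} - ${high:,}")
```

The point of the tally is that each later phase is only funded if the cheaper one before it succeeds.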

The Consciousness Integration Secret

Pharma companies unconsciously practice conscious hypothesis testing:

Stakeholder awareness: Patient safety comes before company profits

Long-term thinking: 15-year development timelines

Systematic humility: Expect failure, design for learning

Ethical constraints: Rigorous safety protocols

Most tech companies practice unconscious hypothesis testing:

Stakeholder blindness: User impact secondary to growth metrics

Short-term pressure: Ship quarterly, fix problems later

Ego attachment: Failure seen as personal/company failure

Ethical afterthoughts: "Let's build it first, then figure out if it's harmful"

Bottom Line: The Pharmaceutical Prototype Principle

If Elizabeth Holmes had tested her blood testing hypothesis with 100 cheap prototypes instead of one expensive lie, Theranos might have revolutionized healthcare instead of becoming the poster child for startup fraud.

If Meta had tested 50 metaverse interaction prototypes with real users instead of betting $13.7B on Zuckerberg's vision, they might have built the future instead of one of the most expensive corporate bets in recent memory.

The pattern is clear:

Companies that prototype extensively before building: Pfizer ($280B market cap), Tesla ($800B market cap)

Companies that build extensively before prototyping: Theranos (bankrupt), Quibi (dead in 6 months)

Your AI project: Which path will you choose?

The hypothesis isn't whether your ideas will fail. The hypothesis is whether you'll fail like Pfizer (profitably) or like Theranos (catastrophically).

Test it cheaply first.

This is article #34 in my #100WorkDays100Articles series, documenting various aspects of AI, busting myths, and making it easier to adopt.

Research Sources:

FDA Drug Development Process Analysis

Pharmaceutical Research and Manufacturers Association Data

McKinsey Enterprise AI Implementation Studies

BCG Digital Transformation Success Rates

Personal analysis of 200+ enterprise technology deployments