The $40 Billion Vanishing Act: Why 56% of CEOs Say AI Returns Nothing

A tale of two realities: the companies banking AI wins versus the majority drowning in pilot purgatory

Day 43 of #100WorkDays100Articles

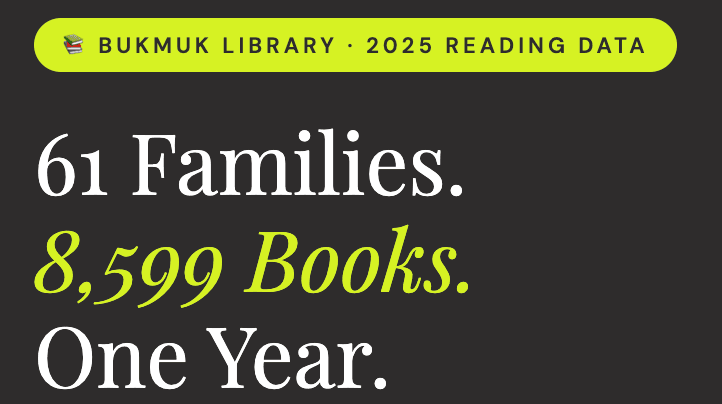

Here's the number that should make every boardroom go quiet: 56% of CEOs report seeing neither increased revenue nor decreased costs from AI, despite massive investments in the technology. That's from PwC's fresh survey of 4,454 business leaders across 95 countries, and it lands like a brick through the hype window.

Not decreased costs. Not increased revenue. Nothing.

The AI gold rush? For most companies, it's turning up fool's gold. And unlike those feel-good vendor case studies flooding LinkedIn, this data comes from the people signing the checks.

The Divide That's Reshaping Business

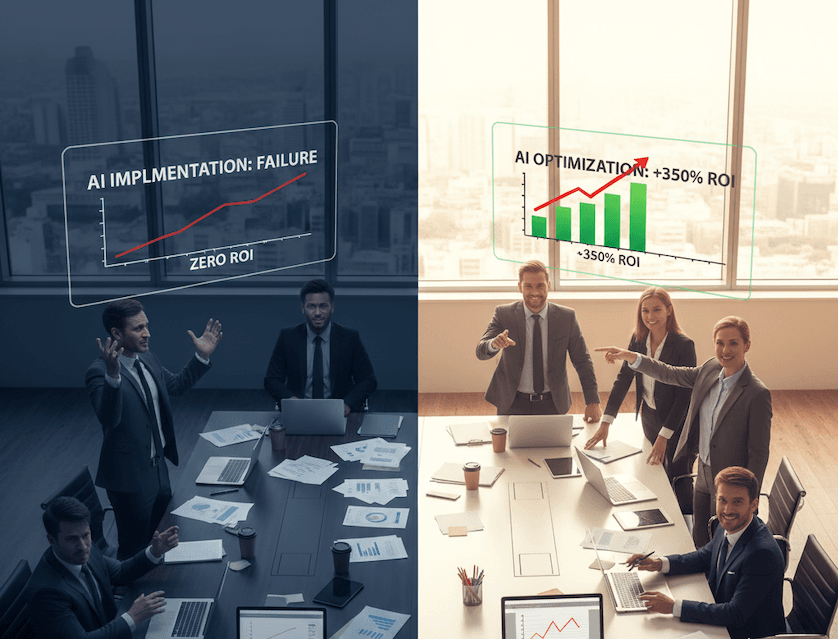

But here's where it gets interesting. While 56% are getting zilch, 12% reported both lower costs and higher revenue. That gap between the winners and losers isn't narrowing. It's becoming a chasm.

CEOs whose organizations have established strong AI foundations are three times more likely to report meaningful financial returns. Three times. This isn't about who bought the shiniest AI tools or who started earliest. This is about who did the boring, unglamorous work of building actual foundations.

The companies winning? They're not running around deploying AI everywhere hoping something sticks. CEOs reporting both cost and revenue gains are two to three times more likely to say they have embedded AI extensively across products and services, demand generation, and strategic decision-making. Notice the word "embedded." Not piloted. Not experimented with. Embedded.

Why Most AI Projects Are Performance Theater

Let's connect this to what MIT discovered last year: 95% of generative AI implementations are falling short, according to research that analyzed 300 initiatives across enterprises. The pattern? Purchasing AI tools from specialized vendors and building partnerships succeed about 67% of the time, while internal builds succeed only one-third as often.

Yet what did MIT researchers find companies doing? "Almost everywhere we went, enterprises were trying to build their own tool," even though purchased solutions succeeded about three times as often.

Think about that. Roughly a two-thirds success rate for buying versus about one-third of that, around 22%, for building. But pride and "competitive differentiation" rhetoric won over pragmatism. Companies spent millions building what they could've bought for thousands, then acted shocked when their custom AI didn't outperform products built by teams who do nothing but AI.
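The buy-versus-build comparison is easy to misread, so here is a quick back-of-the-envelope sketch. It takes the quoted 67% figure as given and shows two possible readings of "one-third": the literal one ("one-third as often" as buying) and the looser one ("one-third of the time"). The function names are illustrative and do not come from the MIT research.

```python
# Success rate for purchased tools and partnerships, as quoted in the article.
BUY_SUCCESS_RATE = 0.67


def build_rate_one_third_as_often(buy_rate: float) -> float:
    """Literal reading: internal builds succeed at one-third of the buy rate."""
    return buy_rate / 3


def build_rate_one_third_of_the_time() -> float:
    """Looser reading: internal builds succeed one time in three."""
    return 1 / 3


def buy_advantage(buy_rate: float, build_rate: float) -> float:
    """How many times more likely a purchased solution is to succeed."""
    return buy_rate / build_rate


if __name__ == "__main__":
    readings = {
        "one-third as often": build_rate_one_third_as_often(BUY_SUCCESS_RATE),
        "one-third of the time": build_rate_one_third_of_the_time(),
    }
    for label, build_rate in readings.items():
        advantage = buy_advantage(BUY_SUCCESS_RATE, build_rate)
        print(f"{label}: build succeeds ~{build_rate:.0%}, buy is {advantage:.1f}x as likely")
```

Under either reading, buying comes out well ahead. The only open question is whether the gap is about twofold or about threefold.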

Here's what happened: Boards approved AI budgets. Executives felt pressure to show "innovation." Internal teams got tasked with building proprietary systems. Nine months later (the enterprise average, per MIT), they had a pilot that kind of worked in controlled conditions but fell apart when real humans tried using it.

Meanwhile, the companies buying specialized tools and getting them working in 90 days? They're already measuring ROI and moving to the next problem.

The Consciousness Gap Nobody's Talking About

PwC's response to their own findings reveals something fascinating and troubling. They conclude that "isolated, tactical AI projects" often don't deliver measurable value, and that tangible returns instead come from enterprise-wide deployments consistent with business strategy.

Wait. Let me get this straight. Your pilot failed, so the answer is... deploy it everywhere anyway?

PwC advises not worrying if an AI pilot project fails, and pushing ahead with a large-scale deployment anyway, provided you have "strong AI foundations" including the right technology environment, a clear roadmap, formalized risk processes, and "an organizational culture that enables AI adoption."

Translation: If it didn't work, you just didn't believe hard enough.

This is precisely backward. It reveals a fundamental misunderstanding of what makes AI work. It's not about faith or enterprise-wide deployment. It's about consciousness of purpose.

What the 12% Actually Do Differently

The companies getting returns didn't skip pilots. They ran pilots that actually tested something meaningful. Not "can we get this AI to write emails" but "can this AI reduce our contract review time by 40% while maintaining accuracy?"
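To make "a pilot that tests something meaningful" concrete, here is a minimal sketch of how the contract review question could be written down as a pass/fail check before the pilot starts. Everything in it is an assumption for illustration: the `PilotResult` fields, the 40% target taken from the example question above, and the definition of "maintaining accuracy" as not falling below the pre-pilot baseline.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """Hypothetical measurements for a contract review pilot."""
    baseline_minutes: float   # average review time per contract before the pilot
    pilot_minutes: float      # average review time per contract during the pilot
    baseline_accuracy: float  # share of contracts reviewed correctly before (0 to 1)
    pilot_accuracy: float     # share reviewed correctly during the pilot (0 to 1)


def time_reduction(result: PilotResult) -> float:
    """Fractional drop in review time relative to the baseline."""
    return (result.baseline_minutes - result.pilot_minutes) / result.baseline_minutes


def pilot_passes(result: PilotResult,
                 target_reduction: float = 0.40,
                 accuracy_tolerance: float = 0.0) -> bool:
    """True only if the pilot hits the time target without losing accuracy."""
    fast_enough = time_reduction(result) >= target_reduction
    accurate_enough = (
        result.pilot_accuracy >= result.baseline_accuracy - accuracy_tolerance
    )
    return fast_enough and accurate_enough


if __name__ == "__main__":
    # Made-up numbers: 120 minutes down to 66, accuracy from 95% to 96%.
    example = PilotResult(120, 66, 0.95, 0.96)
    print(f"Time reduction: {time_reduction(example):.0%}")
    print(f"Pilot passes: {pilot_passes(example)}")
```

The point is not the code itself. It is that the success criteria are fixed in advance, so a pilot that saves time but loses accuracy counts as a failure rather than a "learning."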

They didn't deploy everywhere. They identified the three places where AI would matter most and went deep there. Companies applying AI widely to products, services, and customer experiences achieved nearly four percentage points higher profit margins than those that did not. Notice "widely to products" not "scattered across 47 different internal experiments."

They put foundations first. Not the sexy stuff. The boring infrastructure work. Data pipelines. Integration points. Training programs. Governance frameworks. CEOs whose organizations have established strong AI foundations—such as Responsible AI frameworks and technology environments that enable enterprise-wide integration—are three times more likely to report meaningful financial returns.

And here's what really matters: They empowered the people doing the actual work. Empowering line managers—not just central AI labs—to drive adoption turned out to be essential. The front-line manager who sees the broken process daily knows exactly where AI will help. The central "innovation lab" three floors up? They're guessing.

The Cost of Getting This Wrong

CEO confidence just hit a five-year low. Only 30% of CEOs say they are confident about revenue growth over the next 12 months—down from 38% in 2025 and 56% in 2022. That's not just about AI. It's about geopolitics, cyber threats, tariffs, and the general sense that the ground keeps shifting.

But AI amplifies everything. Get it right, and you've got a competitive advantage that compounds. Get it wrong, and you're burning capital while competitors pull ahead. CEO confidence in the global economy remains positive, yet only 30% are confident they can grow their own businesses. That paradox is part of what Mohamed Kande, PwC's global chairman, calls "one of the most testing moments for leaders."

The window's closing. The debate over whether to adopt AI is over: "Nobody is asking that question anymore. Everybody's going for it." But going for it without consciousness of purpose, without actual strategy, without proper foundations? That's how you join the 56% getting nothing.

What Conscious Implementation Actually Looks Like

Real AI success starts with a question: What human capability are we actually trying to enhance?

Not "what can we automate" but "what human judgment can we amplify." The companies winning aren't replacing people. They're giving people superpowers. Pairing humans with AI boosts productivity by 30-45%, but that means the human stays central to the process.

Pick one real problem. Not ten. One problem that actually hurts your business. Organizations should focus on realistic, unsexy quick wins: one high-volume, low-risk process for a focused pilot with clear success metrics. Not the headline-grabbing stuff. The process that's costing you money or time every single day.

Buy, don't build. Unless you're an AI company, your competitive advantage isn't in custom AI models. It's in using AI better than your competitors use it. Purchased solutions succeed about 67% of the time, while internal builds succeed only about one-third as often, roughly 22%. The math's pretty simple.

Measure what matters. Not "AI adoption rates" or "number of AI tools deployed." Measure actual business outcomes. Customer retention. Deal closure rates. Time to resolution. Cost per transaction. The numbers that already appear on your financial statements.

And here's the part most companies skip: Design for human agency. If your AI makes your people feel less capable, less trusted, or less valuable, it will fail. Not because of the technology but because the people won't use it. Only 14% of workers use generative AI daily, and that gap between investment and adoption tells you everything about whose experience you built for.

The Real Question

We're at an inflection point. 2026 is shaping up as a decisive year for AI. The companies that figure out conscious implementation—that balance ambition with pragmatism, that build foundations before buildings—will pull so far ahead that catching up becomes impossible.

The others? They'll keep running pilots that teach them nothing, deploying tools nobody uses, and wondering why their $40 billion in AI investments returned zero.

You can't fix this by spending more money. You can't fix it by deploying faster. You fix it by asking different questions. By putting human agency at the center. By building boring infrastructure instead of sexy demos. By measuring business outcomes instead of AI metrics.

The gap between the winning 12% and the losing 56% isn't technology. It's consciousness. The question isn't whether your company uses AI. It's whether your company understands what AI is actually for.

And judging by PwC's numbers, most don't.

The companies getting AI right aren't the ones talking loudest about AI. They're the ones quietly embedding it into processes that matter, measuring outcomes that count, and keeping humans at the center of the equation. That's not sexy. But it's the difference between returning zero and returning millions.

Abhinav Girotra is a conscious AI evangelist and doctoral candidate at Golden Gate University. After 25 years in Fortune 500 corporate IT, he now advocates for AI systems that enhance rather than replace human agency. Connect with him on LinkedIn or subscribe to TheSoulTech.com for insights on conscious AI implementation.