Workslop: The $186/Month AI Tax Nobody's Talking About

How unconscious AI adoption is costing enterprises $186 per employee monthly and destroying workplace trust faster than productivity metrics can measure

Bottom line: Your employees are drowning in AI-generated garbage. It's costing you $186 per employee per month, destroying workplace trust, and proving that unconscious AI adoption is worse than no AI at all.

Day #35 of the #100workdays100articles challenge

Last Tuesday morning, a product manager at a Fortune 500 tech company opened what appeared to be a comprehensive competitive analysis.

Beautiful formatting. Professional language. Impressive citations. The kind of document that screams, "I spent hours on this."

Complete rubbish.

Two hours of fact-checking later, plus three conference calls to figure out what the sender actually meant, the team realized they'd wasted their entire morning cleaning up what Stanford researchers now call "workslop."

And this is happening to 40% of American workers every single month.

The tools promising to save us time are stealing it in ways our productivity metrics can't measure. Welcome to the AI productivity paradox.

Workslop: when your inbox becomes a landfill

Think of it as serving someone a beautifully plated dish of plastic food. Looks nourishing. Smells professional. Try to digest it? You'll starve.

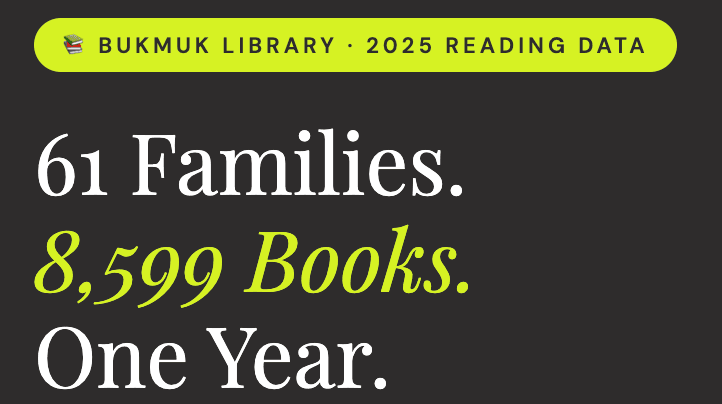

Stanford's Social Media Lab and BetterUp Labs found that 40% of US workers reported receiving AI-generated material in the last month that contained very little in the way of actionable facts and figures. Content that someone else then needed to sort out and turn into something useful.

But wasted time isn't the real damage.

Over half of the recipients felt annoyed. Over a third were confused. Nearly a quarter felt offended.

Worse: 42% said they trusted the sender less after receiving AI garbage. Over a third decided the sender was less creative and intelligent than they originally thought.

We're not just killing productivity. We're nuking workplace trust at an industrial scale.

The math that should terrify every CFO

$186 per affected employee per month in lost productivity. That's what it costs to sort AI hallucinations from actual facts.

Ironically, just a few dollars less than a ChatGPT Pro subscription.

For a company with 1,000 employees at that 40% rate:

400 employees receive workslop each month

$186 per affected employee in lost productivity

$74,400 in monthly losses

$892,800 annually
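To rerun that arithmetic for your own headcount, here is a minimal sketch. The function name and parameters are hypothetical. The 40% rate and $186 figure are the ones quoted above, and the sketch assumes the cost applies only to employees who receive workslop.

```python
def workslop_cost(headcount, affected_rate=0.40, cost_per_affected=186):
    """Estimate workslop losses; returns (affected, monthly_usd, annual_usd)."""
    # Assumption: the $186 monthly cost applies only to affected employees.
    affected = round(headcount * affected_rate)
    monthly = affected * cost_per_affected
    annual = monthly * 12
    return affected, monthly, annual


affected, monthly, annual = workslop_cost(1000)
print(f"{affected} affected employees")   # 400 affected employees
print(f"${monthly:,} in monthly losses")  # $74,400 in monthly losses
print(f"${annual:,} annually")            # $892,800 annually
```

Swap in your own headcount, or a different rate if your internal surveys suggest one. The estimate is only as good as those two inputs.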

Before we factor in destroyed trust, damaged reputations, and the mental exhaustion of wondering whether every document you receive is useful or just AI-flavored word salad.

One finance manager told researchers, "It created a situation where I had to decide whether I would rewrite it myself, make him rewrite it, or just call it good enough. It is furthering the agenda of creating a mentally lazy, slow-thinking society that will become wholly dependent upon outside forces."

That's not productivity. That's organizational decay in business casual.

I've been screaming about this for months

Remember when I said unconscious AI adoption would backfire? When I warned that throwing AI at problems without frameworks would create more chaos than clarity?

The data just arrived.

Despite $30-40 billion in enterprise investment in generative AI, 95% of organizations see zero measurable ROI. MIT found this. Ninety-five percent.

The UK government found no productivity improvement from Microsoft 365 Copilot in the Department for Business and Trade.

This isn't an AI failure. It's a consciousness failure.

Why unconscious AI creates workslop

AI doesn't create workslop. Unconscious people using AI create workslop.

Watch the pattern:

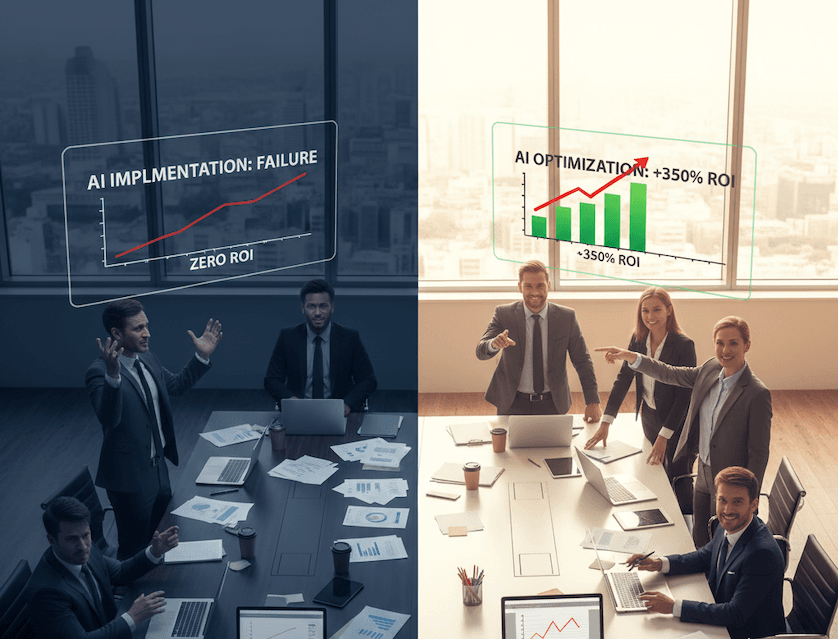

Unconscious approach: Employee thinks "I need to look productive" → dumps vague prompt into ChatGPT → gets generic impressive-looking content → sends it without verification because it "looks good enough" → recipient spends 2 hours decoding garbage.

Conscious approach: Employee thinks "I need to solve a specific problem" → uses AI as a thinking partner with clear parameters → gets a structured draft → adds human expertise and verification before sharing → recipient gets useful, actionable content.

The difference? Sacred intention.

One of my CONSCIOUSNESS Framework pillars—Mindful Foundation—exists to prevent exactly this disaster. Before any AI implementation, we ask:

What is our sacred intention? (Not "to appear productive" but "to serve stakeholders")

Who will this impact? (Recipients deserve truth, not garbage)

What unconscious biases might we perpetuate? (Laziness disguised as efficiency)

Organizations skipping this step are the ones generating workslop.

The trust apocalypse nobody's measuring

We can measure the $186 monthly tax. We can't measure decaying organizational trust.

When 42% of employees lose trust in colleagues who send AI garbage, what's the long-term cost?

How many strategic initiatives fail because teams don't trust each other's analysis? How many innovations die because people assume "it's probably just AI-generated nonsense"? How many high performers leave because they're drowning in digital diarrhea?

One tech boss told researchers it took "an hour or two just to congregate everybody and repeat the information in a clear and concise way" after receiving confusing AI content.

That's not lost productivity. That's organizational scar tissue forming in real-time.

Leadership is part of the problem

Plot twist that should make every C-suite executive uncomfortable:

The survey found 18% of workslop flows up, from employees to their managers. But 16% flows down, from managers themselves.

Leadership isn't immune to this disease. They're carriers.

And with companies insisting staff rely more on AI—or face losing their jobs—we're creating a perverse incentive:

Company mandates AI usage

Employees use AI to appear compliant

Quality craters but output increases

Managers reward output over quality

Workslop becomes the new normal

As staff use the technology more, the temptation to take shortcuts grows. The logic mirrors AI output in general: putting something out there feels better than putting out nothing at all.

That's not AI strategy. That's organizational suicide with extra steps.

The industries creating the most damage

The tech industry is one of the biggest workslop generators. Professional services too.

The irony stings. The industries supposedly leading the AI revolution are most infected by its unconscious misuse.

Why? They have easy access to AI tools, pressure to appear innovative, a culture of moving fast (and breaking trust), and metrics focused on output instead of outcomes.

They're optimizing for the appearance of progress rather than actual value creation.

What conscious AI implementation actually looks like

After 25 years of enterprise technology implementations, and after watching this disaster unfold, I can tell you what separates the 5% achieving ROI from the 95% generating workslop.

They start with sacred intention.

Before deploying any AI tool:

Define the specific problem being solved

Identify all stakeholders affected

Establish quality standards AI output must meet

Create accountability frameworks for human verification

They implement consciousness checkpoints.

Every AI-generated output passes through:

Truth Verification: Have I verified the facts? Checked for hallucinations? Would I stake my professional reputation on this accuracy?

Stakeholder Service: Does this serve the recipient's needs? Have I added my domain expertise? Will this create more work for them or less?

Trust Building: Would I send this if the recipient knew it was AI-generated? Does this enhance or damage my credibility? Am I contributing to organizational trust or eroding it?
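For teams that want to turn these checkpoints into an actual pre-send gate, here is one possible sketch. Everything in it is hypothetical: the names `CHECKPOINTS`, `unresolved`, and `ready_to_send` are illustrative, and the questions have been reworded slightly so that "yes" is always the passing answer.

```python
# Hypothetical pre-send gate built from the three checkpoints above.
# Assumption: each question is phrased so that True means "safe to send".
CHECKPOINTS = {
    "Truth Verification": [
        "Have I verified the facts?",
        "Have I checked for hallucinations?",
        "Would I stake my professional reputation on this accuracy?",
    ],
    "Stakeholder Service": [
        "Does this serve the recipient's needs?",
        "Have I added my domain expertise?",
        "Will this create less work for the recipient, not more?",
    ],
    "Trust Building": [
        "Would I send this if the recipient knew it was AI-generated?",
        "Does this enhance my credibility?",
        "Am I contributing to organizational trust?",
    ],
}


def unresolved(answers):
    """Return (checkpoint, question) pairs not answered with an explicit True."""
    return [
        (name, question)
        for name, questions in CHECKPOINTS.items()
        for question in questions
        if answers.get(question) is not True
    ]


def ready_to_send(answers):
    """The output passes only when every question is answered True."""
    return not unresolved(answers)


# Example: everything confirmed except the hallucination check.
answers = {q: True for qs in CHECKPOINTS.values() for q in qs}
answers["Have I checked for hallucinations?"] = False

print(ready_to_send(answers))  # False
for name, question in unresolved(answers):
    print(f"{name}: {question}")  # Truth Verification: Have I checked for hallucinations?
```

The design choice worth noting is that an unanswered question counts as a failure. Silence is not verification.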

They measure what matters.

Instead of tracking ChatGPT queries, documents generated, or time "saved," they measure recipient satisfaction with AI-enhanced content, reduction in clarification meetings required, trust levels between collaborators, and quality of decisions made from AI-supported analysis.

The hidden cost you're not calculating

Every employee who receives AI-generated garbage learns a dangerous lesson: Don't trust anything you didn't personally verify.

In an era where organizational velocity depends on distributed trust, we're teaching people to assume everything is suspect until proven otherwise.

That's not a productivity tax. It's an innovation killer.

When your best people spend days fact-checking colleagues instead of creating value, you don't have an AI problem. You have a consciousness problem.

The choice facing every organization

We're at an inflection point. The next 12 months will determine whether AI becomes the productivity revolution we were promised or the trust apocalypse now unfolding.

MIT's research shows the 5% of companies succeeding with AI focus on one pain point, execute well, and partner smartly. They're conscious about implementation, not just enthusiastic about adoption.

The remaining 95%? Generating workslop at scale and wondering why billion-dollar AI investments feel like throwing money into a black hole lined with chatbots.

Every AI tool you deploy without consciousness frameworks isn't a productivity enhancement. It's a trust destruction device operating at the speed of automation.

Your employees are already experiencing this. 40% are drowning in workslop right now.

The question isn't whether you have a problem. The question is whether you have the consciousness to solve it.

Research Sources:

Stanford Social Media Lab & BetterUp Labs: Workslop Study (September 2025)

MIT Media Lab's NANDA Initiative: "The GenAI Divide: State of AI in Business 2025"

The Register: "Many employees are using AI to create 'workslop'" (September 2025)

UK Government: M365 Copilot Productivity Study

S&P Global: AI Pilot Abandonment Research

P.S. - If you caught yourself thinking, "I should use ChatGPT to write a response to this article," you just proved my point. Try writing from your actual experience instead. I promise the result will be more valuable than any AI-generated comment could ever be.