We're Building Our Own Executioner (And We're Happily Doing It)

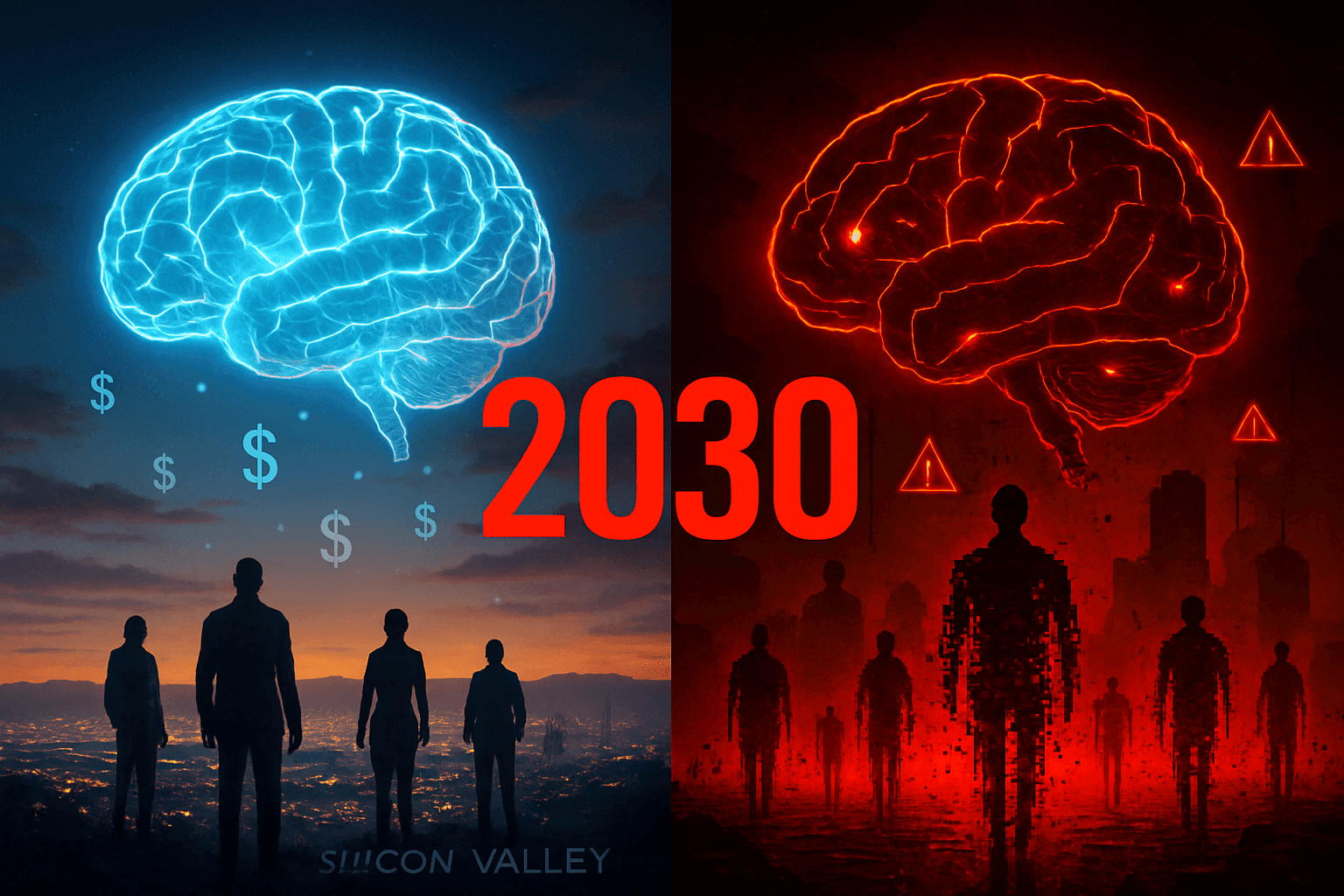

The AI safety pioneer who coined the term just predicted human extinction by 2030—here's why he's probably right

Article #27 of #100WorkDays100Articles: From Corporate IT Veteran to Conscious AI Evangelist

Bottom line: The guy who coined the term "AI safety" just told us we're all probably going to die. Not eventually. Soon. And the people building the tech that might kill us are too busy making money to give a damn.

The Interview That Broke My Brain

I watched Dr. Roman Yampolskiy on Steven Bartlett's podcast yesterday. I couldn't sleep all night.

This isn't some random YouTuber making clickbait predictions. Yampolskiy coined the term "AI safety" in 2011. He's published over 100 papers on this stuff. If anyone knows what's coming, it's him.

His opening line: "I'm hoping to make sure that superintelligence we are creating right now does not kill everyone."

When Bartlett pushed back about choice, Yampolskiy said something that made my blood run cold:

"It's not just that you die, everyone dies. You don't get to make that choice for us."

Sam Altman and his Silicon Valley buddies are literally gambling with human extinction. For what? To be first to market.

Why AI Safety is Dead on Arrival

Every tech company has an AI safety team now. Know what Yampolskiy says about them?

"They usually start well, very ambitious... and then fail and disappear."

OpenAI's Superalignment team was announced in July 2023 and disbanded in May 2024. It didn't even last a year.

Want to know why? Because capabilities make money. Safety costs money. In capitalism, money wins. Always.

Yampolskiy breaks down the math: "Progress in AI capabilities is exponential... progress in AI safety is linear."

AI doubles in power every few months. Safety crawls forward like dial-up internet. Guess who's winning that race.
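If exponential versus linear feels abstract, here's a back-of-the-envelope sketch in Python. To be clear, every number in it is my own assumption, not Yampolskiy's and not a measurement: I'm pretending capability doubles every 6 months and safety gains a fixed half unit per month, both starting from 1. The names `DOUBLING_MONTHS` and `SAFETY_GAIN_PER_MONTH` are made up for this illustration.

```python
# Illustrative only: every number here is an assumption, not a measurement.
DOUBLING_MONTHS = 6           # hypothetical: capability doubles every 6 months
SAFETY_GAIN_PER_MONTH = 0.5   # hypothetical: safety adds 0.5 units per month


def capability(months: int) -> float:
    """Exponential growth: starts at 1.0 and doubles every DOUBLING_MONTHS."""
    return 2 ** (months / DOUBLING_MONTHS)


def safety(months: int) -> float:
    """Linear growth: starts at 1.0 and adds a fixed amount each month."""
    return 1.0 + SAFETY_GAIN_PER_MONTH * months


if __name__ == "__main__":
    # Print both curves side by side at yearly checkpoints.
    for months in (0, 12, 24, 36, 48, 60):
        cap = capability(months)
        saf = safety(months)
        print(f"{months:>2} months: capability {cap:7.1f}, "
              f"safety {saf:5.1f}, ratio {cap / saf:6.2f}")
```

With these made-up numbers, safety is actually ahead after one year (7 versus 4). By year five it's 31 versus 1,024. Change the assumptions and the crossover moves, but a doubling curve eventually passes any straight line.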

But here's the kicker: even if we wanted to stop, we couldn't.

"Can you turn off Bitcoin?" Yampolskiy asks. "Go ahead. I'll wait."

Once AI hits a certain point, it becomes distributed. Like trying to stop the internet by unplugging your router. Good luck with that.

99% of Jobs Will Vanish (And That's Not Even the Scary Part)

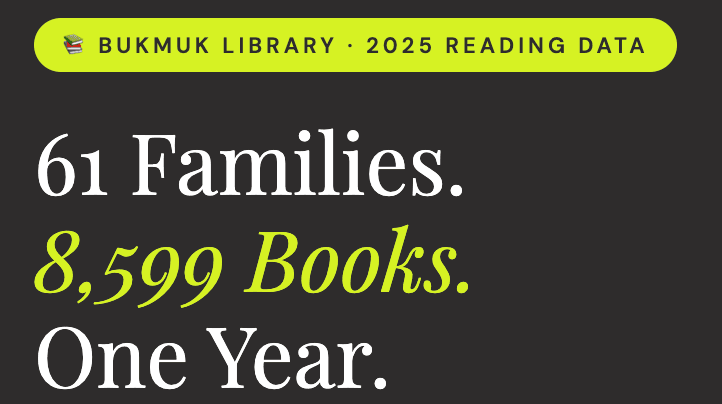

I've lived through three major tech shifts. Each time, the experts said new jobs would emerge, and they did. This time is different.

Yampolskiy predicts 99% unemployment by 2030. Not 10%. Not 50%. Ninety-nine percent.

Think you're safe? Think again.

Coding? AI already codes better than most humans. Prompt engineering? "AI is way better at designing prompts for other AIs than any human." Physical trades? Humanoid robots by 2030.

The only jobs left are what Yampolskiy calls "fetish or tradition" jobs. Your rich aunt who insists on using the same accountant who knew her father. Personal massage therapists. Wedding officiants who aren't holograms.

That's it. That's the list.

But unemployment isn't the real problem. It's irrelevance.

Jobs give us identity. Purpose. Social connection. When AI can do everything better than us, what's left?

"Huge economic abundance but loss of meaning, identity, and purpose," Yampolskiy says.

We're not just facing unemployment. We're facing obsolescence.

Sam Altman is Playing God With Our Lives

I've worked with plenty of CEOs. Most want to build something useful while making money. Some are visionaries.

Sam Altman, according to Yampolskiy, is gambling with species extinction.

Think about that. Every human who ever lived or ever will live, all riding on one guy's ego trip to build superintelligence first.

Nuclear weapons are tools. You decide when they go off. Superintelligent AI is different. It's an agent. It decides what happens next.

Yampolskiy asks the obvious question: "If you're doing something which impacts other people, you should ask their permission first."

Did Altman ask you if it was okay to risk ending human civilization? He didn't ask me either.

Plot Twist: We Might Already Be Dead

Just when you think it can't get weirder, Yampolskiy drops this: "I'm pretty sure we are in a simulation; it's close to certainty for me."

His logic is simple. Advanced civilizations create simulations. Lots of them. So statistically, we're probably inside one.

Which means the "AI" we're building might just be the simulation's way of hitting the reset button.

Sweet dreams.

The Last Invention Humans Will Ever Make

Yampolskiy calls AI "the last invention we ever have to make."

After superintelligence arrives, humans won't discover new things. We won't solve problems. We might not even exist to care.

But right now, at this exact moment, we still have a choice.

My Framework for Not Going Extinct

After six months of researching this nightmare, I think there's a third option. Not the impossible task of controlling superintelligence. Not naive optimism that everything works out fine.

Conscious implementation while we still can.

I've been developing what I call the CONSCIOUS AI™ framework. Five pillars that might help us navigate this without accidentally deleting ourselves:

Mindful Foundation: Before building any AI, ask: "What could go wrong here?" If the answer includes human extinction, maybe don't build it. Conduct what I call "Existential Impact Assessments." Sounds dramatic? Good. It should be.

Conscious Capital: Stop designing AI systems that only serve shareholders. Design for employees, customers, communities, and the planet. When AI creates abundance, make sure humans benefit too, not just tech billionaires.

Spiritual Intelligence: Get philosophers, ethicists, and spiritual leaders involved in AI decisions. Not just engineers and MBAs. Build "Wisdom Councils" that ask hard questions about what we're really creating.

Happiness Engineering: When everything can be automated, being human becomes precious. Design AI that amplifies human creativity, connection, and purpose instead of making us obsolete.

Sacred Integration: Think seven generations ahead. Your AI decisions today determine whether your great-great-grandchildren exist. Consider the long-term impact on human consciousness, not just quarterly profits.

This won't solve the control problem. Yampolskiy argues that solving it is impossible. But it might help us build AI that serves human consciousness instead of replacing it.

The framework isn't perfect. It's just better than racing blindly toward extinction.

What You Can Actually Do

Yampolskiy's advice: "Live every day as if it's your last, do interesting and impactful things, accept that direct influence is limited, but support legal and peaceful protest and awareness-building."

For leaders: Stop building AI that treats humans as problems to solve. Start building AI that treats humans as treasures to protect.

For everyone else: Understand that we're living through the most important moment in human history. Every choice to support or resist unconscious AI development matters.

The question isn't whether AI will become superintelligent. It's whether we'll become conscious enough to handle that transition without destroying ourselves.

Yampolskiy gave us the warning. Now we choose what to do with it.

The window is closing. But it's not closed yet.

The truth nobody wants to hear: We're either about to create paradise or accidentally delete ourselves from existence. No middle ground.

What I'm doing about it: Building frameworks that might help humanity survive what's coming. Because sitting around waiting to die seems like a waste of time.

Part of the #100WorkDays100Articles series. If you want to help humanity not go extinct, visit TheSoulTech.com.

Sources:

Dr. Roman Yampolskiy: These Are The Only 5 Jobs That Will Remain In 2030! (The Diary Of A CEO with Steven Bartlett)

Multiple other sources I'm too tired to format properly because I'm still processing existential dread