The Great Agentic Chess Match

How Tech Giants Are Playing for the Future (And We're Not Even Watching)

Day 24 of #100WorkDays100Articles

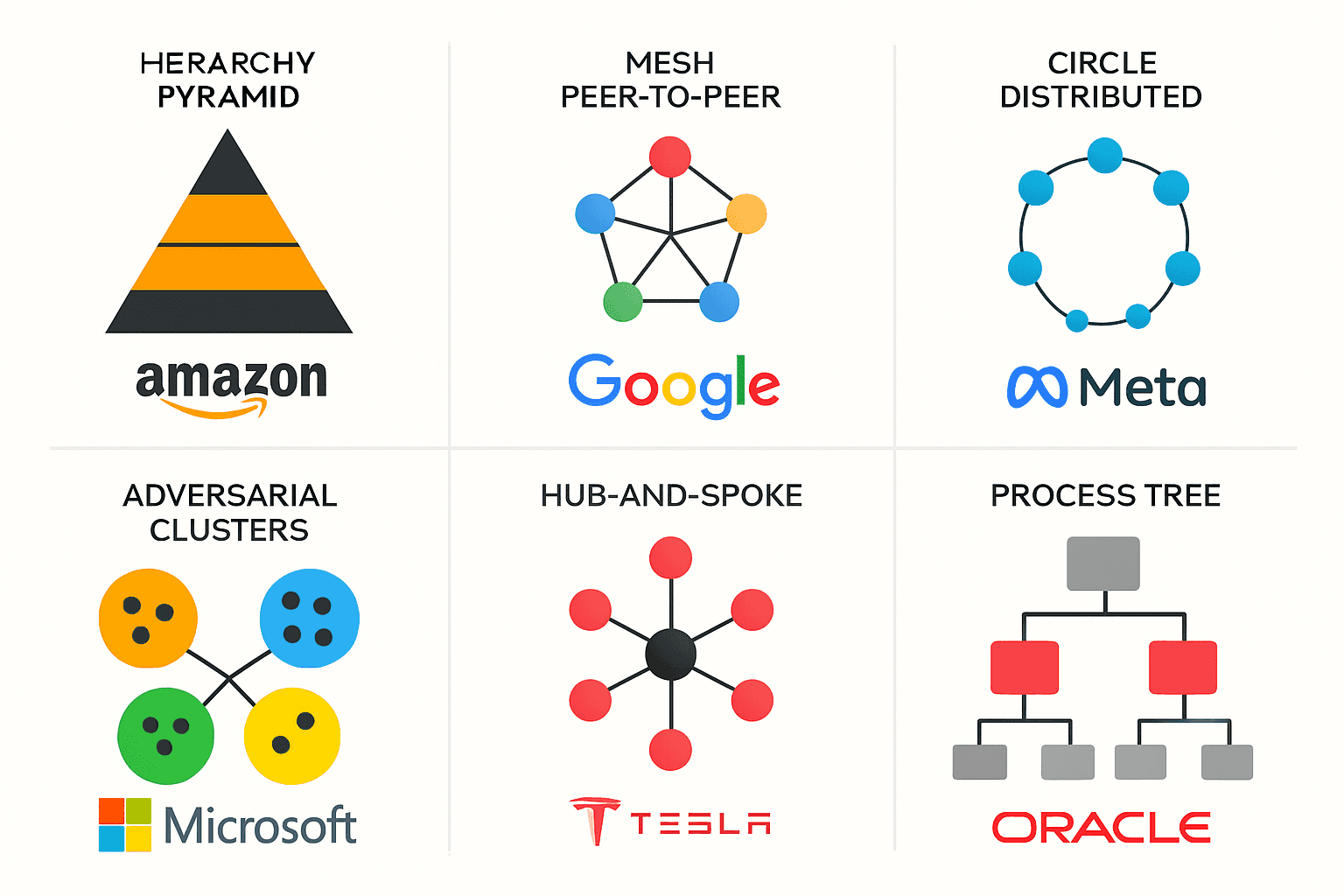

Sarah posted this image in our doctoral program group chat yesterday at 10:54 PM. Six diagrams showing how Amazon, Google, Meta, Microsoft, Tesla, and Oracle think AI agents should talk to each other.

I've been staring at it for 18 hours.

Not because it's technically complex. Because it's a psychological profile of Silicon Valley's power structure, disguised as software architecture.

What Are AI Agents, Actually?

Before we dive into these corporate chess moves, let's get clear on what we're talking about.

Traditional AI responds to prompts. You ask, it answers, conversation over.

AI agents are different. They're autonomous software systems that can:

Reason through multi-step problems

Plan sequences of actions to achieve goals

Use tools like APIs, databases, and external services

Maintain memory across interactions and sessions

Learn from outcomes to improve future performance

Think of them as digital employees that never sleep, never take breaks, and never forget what you taught them last month.

The game-changer? Multi-agent systems where hundreds or thousands of these digital employees collaborate, compete, and coordinate to solve problems no single agent could handle alone.

That's what these six diagrams represent: six different philosophies for organizing AI workforces.

Amazon's Hierarchical Command Structure

Of course they built a pyramid.

Look at that top-left diagram. It's a classic master-slave architecture disguised as "AgentCore." Higher-level agents decompose complex tasks and assign them to subordinate agents. Each subordinate runs independently but reports progress back up the chain.

Technically, this resembles Hierarchical Task Network (HTN) planning with centralized orchestration. The master agent maintains a global state store while worker agents operate in isolated execution contexts with session-level security boundaries.

The genius? Complete audit trails. Every decision, every delegation, every failure maps back to a specific agent in the hierarchy. Amazon's betting that enterprises will pay premium prices for explainable autonomy.

But here's the technical trade-off nobody mentions: hierarchical systems create single points of failure at every management layer. When your orchestrator agent crashes, every subordinate becomes an orphaned process.
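
To make the audit-trail idea and its failure mode concrete, here is a minimal sketch. It is not Amazon's implementation: the `Orchestrator` class, the `AuditEntry` record, and the worker names are all hypothetical, chosen only to illustrate delegation with logging.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AuditEntry:
    agent: str   # which agent acted
    action: str  # "delegate", "complete", or "fail"
    detail: str


@dataclass
class Orchestrator:
    """Hypothetical top-level agent that delegates and logs every step."""
    name: str
    workers: dict[str, Callable[[str], str]]  # worker name -> handler
    audit_log: list[AuditEntry] = field(default_factory=list)

    def run(self, subtasks: dict[str, str]) -> dict[str, str]:
        results: dict[str, str] = {}
        for worker_name, subtask in subtasks.items():
            self.audit_log.append(
                AuditEntry(self.name, "delegate", f"{subtask} -> {worker_name}")
            )
            try:
                results[worker_name] = self.workers[worker_name](subtask)
                self.audit_log.append(AuditEntry(worker_name, "complete", subtask))
            except Exception as exc:
                # A worker failure is contained and traceable to one agent.
                self.audit_log.append(
                    AuditEntry(worker_name, "fail", f"{subtask}: {exc}")
                )
        return results


def failing_worker(task: str) -> str:
    raise RuntimeError("worker crashed")


orchestrator = Orchestrator(
    name="root",
    workers={"pricing": lambda t: f"done: {t}", "shipping": failing_worker},
)
results = orchestrator.run({"pricing": "quote order", "shipping": "book courier"})
```

Notice what the sketch does and does not protect against. A crashed worker leaves a log entry and the other workers carry on. If `orchestrator.run` itself dies, nothing is left to dispatch or collect work, which is the single point of failure described above.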

Google's Peer-to-Peer Mesh Network

That top-right diagram shows distributed consensus in action. No central authority. Every agent can talk to every other agent through bidirectional communication channels.

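Technically, they're implementing the Agent2Agent (A2A) protocol, which Google positions as a complement to the Model Context Protocol (MCP) rather than a layer on top of it: MCP connects an agent to tools and data, A2A connects agents to each other. Think of it as GraphQL for AI agents - each agent publishes a description of its capabilities (an Agent Card, in A2A terms), and other agents can discover and invoke them dynamically.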

The communication flow works like this:

Agent Discovery: Agents broadcast capability advertisements

Capability Negotiation: Requesting agent queries available functions

Task Delegation: Work distributed based on agent specialization

Result Aggregation: Responses collected and synthesized
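
The four steps above can be sketched with an in-memory registry. This is an illustration of the flow only, not the A2A wire format: `Agent`, `Registry`, and `delegate_and_aggregate` are hypothetical names, and real agents would talk over a network instead of calling each other's functions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    name: str
    capabilities: dict[str, Callable[[str], str]]  # capability name -> handler


class Registry:
    """In-memory stand-in for a discovery mechanism (an assumption)."""

    def __init__(self) -> None:
        self._agents: list[Agent] = []

    def advertise(self, agent: Agent) -> None:
        # Step 1, discovery: an agent announces what it can do.
        self._agents.append(agent)

    def find(self, capability: str) -> list[Agent]:
        # Step 2, negotiation: a requester asks who offers a capability.
        return [a for a in self._agents if capability in a.capabilities]


def delegate_and_aggregate(
    registry: Registry, capability: str, payload: str
) -> dict[str, str]:
    # Step 3, delegation: send the task to every agent that matches.
    # Step 4, aggregation: collect the responses keyed by agent name.
    return {
        agent.name: agent.capabilities[capability](payload)
        for agent in registry.find(capability)
    }


registry = Registry()
registry.advertise(Agent("translator", {"translate": lambda s: s.upper()}))
registry.advertise(Agent("summarizer", {"summarize": lambda s: s[:5]}))
answers = delegate_and_aggregate(registry, "summarize", "hello world")
```

Only the summarizer answers here, because it is the only agent that advertised the requested capability.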

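It's beautiful. It's also fault-tolerant by design - with no central coordinator, the system keeps working when individual agents fail. Surviving agents that behave maliciously is a stronger guarantee, Byzantine fault tolerance, and it takes an explicit consensus protocol to get there.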

But peer-to-peer systems have a dirty secret: consensus is expensive. Every major decision requires multiple agents to agree. That's a lot of network chatter and computational overhead for simple tasks.
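
A back-of-the-envelope count shows where the chatter comes from. The sketch assumes the simplest possible scheme, one voting round in which every agent sends its vote to every other agent, and compares it with a coordinator that sends one request to each worker and gets one reply. Both function names are made up for this example.

```python
def mesh_messages_per_round(n_agents: int) -> int:
    """All-to-all voting: each of n agents messages the other n - 1."""
    if n_agents < 0:
        raise ValueError("n_agents must be non-negative")
    return n_agents * (n_agents - 1)


def hub_messages_per_round(n_workers: int) -> int:
    """Coordinator model: one request out and one reply back per worker."""
    if n_workers < 0:
        raise ValueError("n_workers must be non-negative")
    return 2 * n_workers


mesh_cost = mesh_messages_per_round(10)
hub_cost = hub_messages_per_round(10)
```

The mesh cost grows with the square of the agent count while the coordinator cost grows linearly. Real consensus protocols often need several rounds, so this count is a floor under that assumption, not an estimate of any vendor's system.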

Meta Pretends They're Not Facebook

That bottom-left diagram shows "peer-to-peer agents" because Meta desperately wants you to forget they built their empire on surveillance capitalism.

But peer-to-peer systems are hard. Really hard. They require trust mechanisms, conflict resolution, and consensus algorithms. Facebook couldn't even figure out content moderation with humans.

Good luck with AI democracy, Mark.

Microsoft's Adversarial Multi-Agent System

Those adversarial clusters reveal Microsoft's deep understanding of competitive cooperation.

This isn't just multi-agent - it's adversarial multi-agent reinforcement learning. Different agent clusters compete for resources while collaborating toward shared objectives. Think game theory meets distributed computing.

The technical implementation uses multi-objective optimization where agents have competing goals:

Sales agents optimize for revenue

Legal agents optimize for compliance

Security agents optimize for risk reduction

Customer service agents optimize for satisfaction

The magic happens in the conflict resolution layer - a meta-agent that mediates between competing objectives using Pareto optimization. Instead of choosing winners and losers, the system looks for solutions where no objective can be improved without making another one worse, and discards any option that some other option matches or beats on every objective.
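
Pareto filtering is easy to show in a few lines. The sketch below is a generic Pareto-front filter, not Microsoft's code: the `Proposal` type and the scores are invented for illustration, with one score per objective in the order revenue, compliance, risk reduction, satisfaction.

```python
from typing import NamedTuple


class Proposal(NamedTuple):
    name: str
    scores: tuple[float, ...]  # higher is better on every objective


def dominates(a: Proposal, b: Proposal) -> bool:
    """a dominates b if it is at least as good everywhere and better somewhere."""
    pairs = list(zip(a.scores, b.scores))
    return all(x >= y for x, y in pairs) and any(x > y for x, y in pairs)


def pareto_front(proposals: list[Proposal]) -> list[Proposal]:
    """Keep only the proposals that no other proposal dominates."""
    return [
        p for p in proposals
        if not any(dominates(q, p) for q in proposals)
    ]


# Hypothetical scores: (revenue, compliance, risk reduction, satisfaction)
proposals = [
    Proposal("aggressive discount", (0.9, 0.4, 0.3, 0.8)),
    Proposal("standard contract", (0.6, 0.9, 0.8, 0.6)),
    Proposal("slow and costly", (0.5, 0.8, 0.7, 0.5)),
]
front = pareto_front(proposals)
```

The third proposal drops out because the standard contract beats it on every objective. The first two both survive: each wins on something the other loses on, and choosing between them is a judgment call the filter cannot make.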

Microsoft's insight: productive tension creates better solutions than forced harmony. Their Semantic Kernel orchestrates these conflicts through a plugin-based architecture where each agent cluster can leverage specialized tools while maintaining competitive autonomy.

Tesla's Hub-and-Spoke Master Agent

That hub-and-spoke model is centralized intelligence with distributed execution. One master agent connected to specialized worker agents through high-bandwidth communication channels.

Technically, this is hierarchical reinforcement learning with a twist - the master agent doesn't just assign tasks, it learns from every worker interaction. Tesla's using federated learning principles where edge agents (the spokes) send what they learn back to the central model, typically as model updates rather than raw data.

The architecture enables real-time coordination across autonomous systems:

Perception agents process sensor data

Planning agents optimize trajectories

Control agents execute motor commands

Learning agents update models based on outcomes

The master agent runs attention mechanisms to dynamically focus computational resources. When driving conditions change, it can instantly reallocate processing power from less critical systems to emergency response.
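
One way to picture that reallocation is a softmax over urgency scores, the same normalization attention mechanisms use to turn scores into weights. Nothing here is Tesla's code: `allocate_compute`, the subsystem names, and the urgency numbers are assumptions made for the example.

```python
import math


def allocate_compute(
    urgency: dict[str, float], budget: float, temperature: float = 1.0
) -> dict[str, float]:
    """Split a compute budget across subsystems with a softmax over urgency."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Subtract the peak score before exponentiating for numerical stability.
    peak = max(urgency.values())
    weights = {
        name: math.exp((score - peak) / temperature)
        for name, score in urgency.items()
    }
    total = sum(weights.values())
    return {name: budget * w / total for name, w in weights.items()}


# Hypothetical urgency scores under normal driving and during an emergency.
normal = allocate_compute(
    {"perception": 1.0, "planning": 1.0, "infotainment": 1.0}, budget=100.0
)
emergency = allocate_compute(
    {"perception": 4.0, "planning": 4.0, "infotainment": 0.0}, budget=100.0
)
```

With equal urgency the budget is split evenly. When perception and planning spike, the low-priority subsystem is squeezed to a sliver of the budget without anyone hand-writing a rule for that specific situation.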

But here's the technical risk: single point of cognitive failure. If the master agent makes a bad decision, every connected system executes that bad decision simultaneously. At 70 mph, you don't get a second chance.

Oracle's Specialized Process Agents

While everyone else builds general intelligence, Oracle builds domain-specific expert systems.

That legal agents diagram shows workflow automation with specialized knowledge graphs. Each agent is a fine-tuned language model trained on specific document types:

Contract analysis agents understand legal terminology embeddings

Invoice processing agents handle structured data extraction

Compliance agents maintain regulatory rule databases

The technical architecture uses microservices patterns where each agent is a containerized service with its own knowledge base and inference engine. Agents communicate through REST APIs with JSON schema validation.

Oracle's insight: narrow intelligence beats general intelligence for repetitive business processes. Their agents don't need to understand everything - they need to understand one thing perfectly.

The implementation leverages template-based reasoning where agents match incoming documents against pre-trained patterns. When confidence scores drop below thresholds, agents escalate to human experts through human-in-the-loop workflows.
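
The escalation rule is the simplest part of the design to sketch. The threshold value, the `Classification` record, and the document IDs below are illustrative assumptions, not Oracle's actual settings.

```python
from dataclasses import dataclass


@dataclass
class Classification:
    document_id: str
    label: str
    confidence: float  # between 0.0 and 1.0


def route(result: Classification, threshold: float = 0.85) -> str:
    """Return 'auto' when the agent may act alone, else 'human_review'."""
    return "auto" if result.confidence >= threshold else "human_review"


# Hypothetical agent outputs for two incoming documents.
queue = [
    Classification("inv-001", "invoice", 0.97),
    Classification("ctr-042", "contract", 0.61),
]
decisions = {c.document_id: route(c) for c in queue}
```

The whole design lives in that one threshold. Set it too low and mistakes go through unreviewed. Set it too high and the human queue fills up with documents the agent could have handled.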

It's boring. It's reliable. It processes millions of documents without hallucinating or getting creative with contract interpretations.

The Technical Consciousness Question

Each architecture embodies different approaches to human-AI collaboration patterns:

Amazon's hierarchy implements human-supervisory control - humans maintain authority through management layers, but risk automation bias where humans over-trust automated decisions.

Google's mesh network enables human-AI teaming through collective decision-making - humans and agents vote on decisions, but consensus mechanisms can be gamed by adversarial agents.

Microsoft's adversarial system uses human-mediated competition where conflicts escalate to human arbitrators, but requires sophisticated multi-criteria decision analysis frameworks.

Tesla's centralized model relies on human-validated autonomy - humans verify master agent decisions, but cognitive load grows quickly as system complexity increases.

Oracle's process agents implement human-exception handling - agents handle routine cases, humans handle edge cases, but boundary detection between routine and exceptional is non-trivial.

The Protocol Wars Beneath the Surface

These aren't just architectural differences. They're battles over communication standards:

Google's A2A protocol vs. Anthropic's Model Context Protocol (MCP) vs. Microsoft's Semantic Kernel plugins vs. Amazon's AgentCore APIs.

The winner determines how AI agents talk to each other for the next decade. That's vendor lock-in disguised as interoperability standards.

Which of these philosophies do you want running your business?

Not which one is most technically sophisticated. Not which one has the best marketing. Which one aligns with how you actually think humans and machines should work together?

Because that's what you're choosing when you pick an AI architecture.

You're not just buying software. You're buying a worldview.

Why This Matters More Than You Think

I spent two decades watching companies adopt technology based on features and pricing.

Nobody ever asked: "Does this reflect our values?"

Nobody ever asked: "What kind of organization does this technology want us to become?"

Nobody ever asked: "Are we choosing this because it's right, or because everyone else is choosing it?"

Now we're making the same mistake with AI agents.

We're optimizing for capability and cost. We're not optimizing for consciousness.

The Real Choice

These six diagrams represent six different futures for human-AI collaboration.

Hierarchy or emergence. Control or chaos. Conflict or consensus. Centralization or distribution. Innovation or reliability.

We're sleepwalking into one of these futures based on which company has the best sales team.

That's insane.

We should choose consciously. Based on what kind of world we want to build.

Not what kind of quarterly results we want to deliver.

What I'm Building Instead

None of these architectures ask the right question: "How do we build AI systems that make humans more human?"

They're all optimizing for efficiency, scalability, or competitive advantage.

I'm building frameworks that optimize for human flourishing.

It's slower. It's messier. It's less profitable in the short term.

It's also the only approach that makes sense if you think consciousness matters more than quarterly earnings.

Which diagram scares you most? Which one excites you? Your answer says more about your organization's future than any strategic plan.