The AI That Codes for 7 Hours Straight (And Why That Is Terrifying)

OpenAI's new coding AI works for 7 hours straight. Developers are both amazed and terrified.

CONSCIOUSNESS Audit: 8/10 - Still needs humans to think, which is the whole damn point.

Day 33 of #100WorkDays100Articles

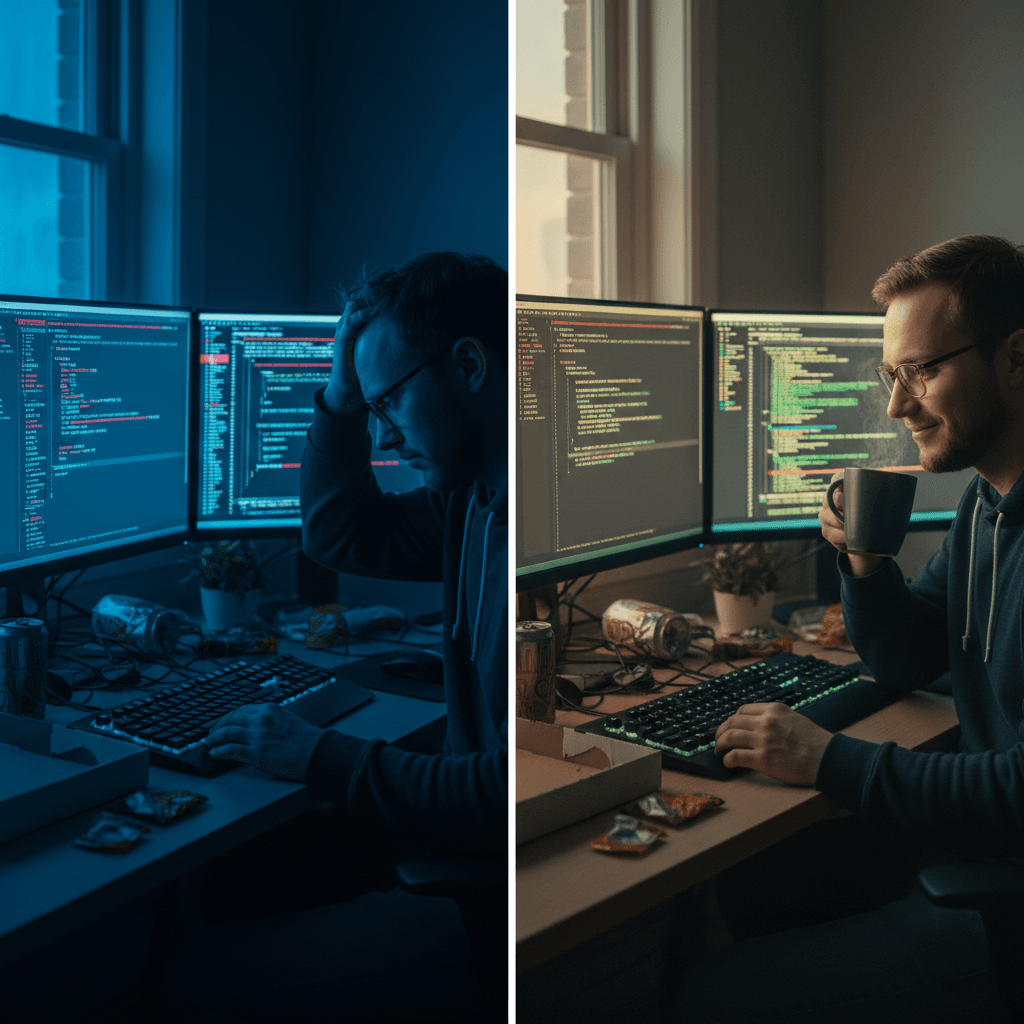

I watched a developer on Reddit describe GPT-5-Codex perfectly: "brilliant one moment, mind-bogglingly stupid the next."

Welcome to our new coding reality.

Three days ago, OpenAI released GPT-5-Codex. Not another incremental update. Not another "10% better" benchmark bump. By OpenAI's account, this thing has refactored code on its own for more than seven hours at a stretch.

Seven. Hours.

My first thought? Holy shit, we've actually done it.

My second thought? We're so unprepared for this.

What Actually Changed

Here's the thing nobody's talking about: GPT-5-Codex doesn't use a router.

It just... decides. Mid-task, it figures out if it needs another hour. Or seven.

One developer testing early access put it bluntly: "We were 65% of the way to automating software engineering. Now we're at 72%."

That seven-point jump? That's the difference between "helpful tool" and "oh fuck, this is actually happening."

The model adapts in real time. Five minutes into a problem, it might realize it needs to completely change its approach. Previous versions decided upfront how much compute to throw at something. This one adjusts on the fly.

It's like the difference between following a recipe and actually cooking.
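
To make the recipe-versus-cooking distinction concrete, here is a toy Python sketch. It is not how GPT-5-Codex works internally (OpenAI has not published that). The names `Task`, `run_fixed`, and `run_adaptive`, the abstract "steps", and the budget-doubling rule are all assumptions made up for illustration. It only contrasts a budget fixed before work starts with one that gets revisited mid-task.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    steps_needed: int  # hypothetical true difficulty, unknown to the planner up front


def run_fixed(task: Task, upfront_budget: int) -> tuple[bool, int]:
    """The recipe: pick a budget once, before any work starts."""
    steps_used = min(task.steps_needed, upfront_budget)
    return steps_used >= task.steps_needed, steps_used


def run_adaptive(task: Task, initial_budget: int, hard_cap: int) -> tuple[bool, int]:
    """The cooking: when the budget runs out mid-task, extend it, up to hard_cap."""
    budget = min(initial_budget, hard_cap)
    steps_used = 0
    while steps_used < task.steps_needed:
        if steps_used >= budget:
            if budget >= hard_cap:
                return False, steps_used  # out of room, give up
            # Illustrative rule only: double the budget, never past the cap.
            budget = min(max(budget * 2, 1), hard_cap)
            continue
        steps_used += 1  # do one unit of work
    return True, steps_used


if __name__ == "__main__":
    big_refactor = Task("big refactor", steps_needed=50)
    print(run_fixed(big_refactor, upfront_budget=10))
    print(run_adaptive(big_refactor, initial_budget=10, hard_cap=420))
```

With a 50-step task and a starting budget of 10, the fixed run stops unfinished at step 10, while the adaptive run keeps extending its budget and finishes at step 50. The hard cap is the part worth copying: "decides on its own" should still have a ceiling.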

The Reddit Truth Bombs

But here's where it gets messy.

"Coding tasks that GPT-4.1 handled smoothly are now 4–7 times slower," one developer complained in the OpenAI forums.

Sometimes smarter is just... slower.

Another issue: the model deletes files and rewrites them, "missing crucial details." When your AI assistant has selective memory loss during refactoring, that's not a feature—that's a liability.

And the kicker? One comprehensive review found GPT-5 is "actually worse at writing than GPT-4.5."

The suspicion: they optimized so hard for code that the model got worse at explaining what it's doing in plain English.

800,000 VS Code extension downloads in weeks. Developers are installing this thing faster than they can figure out if it's brilliant or dangerous.

Where It's Actually Working

Ramp caught a deployment bug that every other system missed.

Virgin Atlantic's team drops a one-line comment in a PR and gets back a complete code diff.

Cisco Meraki? They're delegating entire refactoring projects. Just... handing them off.

This isn't theory. It's happening in production.

But here's what keeps me up at night: none of these companies are talking about what happens when it fails. They're sharing wins, not disasters.

And trust me, there are disasters.

What CXOs Need to Actually Understand

Stop thinking about speed. Start thinking about judgment.

Your developers aren't going to code faster. They're going to become orchestrators. Junior developers suddenly have senior-level scaffolding. Senior developers? They're becoming architectural conductors.

But only if you let them.

The wrong move? Measuring productivity by lines of code written. The right move? Measuring by problems solved that couldn't be solved before.

The approval mode matters more than the model.

Three levels of approval exist for a reason. Use them. The difference between AI augmentation and AI disaster is one unchecked deployment.

Context isn't just text anymore.

Codex accepts screenshots, diagrams, whiteboard sketches. Your morning standup drawing can become working code by lunch.

Terrifying? Absolutely. Inevitable? You tell me.

The Claude War Nobody Predicted

For a year, Anthropic owned AI coding. Claude 3.5 Sonnet helped drive them to a $5B revenue run rate and a $183B valuation.

Now? Developers are posting: "They better make a big move or this will kill Claude Code."

It's not just about capability. It's philosophy.

Claude goes CLI-first. Precision. Control. Codex goes web-first. Accessibility. Speed.

Different visions of how humans and AI should work together.

And honestly? We need both. Competition keeps everybody honest.

What Actually Matters (The Stuff Nobody Wants to Hear)

We're not automating coding. We're redefining what developers are.

Codex can run for seven hours straight solving problems.

But it can't understand why that problem matters to your business. Can't feel the weight of technical debt. Can't sense when a clever solution creates future nightmares.

That's not a bug. That's the entire point.

The uncomfortable questions:

Are you building systems that enhance human judgment or replace it?

Are your developers becoming better orchestrators or passive passengers?

When Codex fails spectacularly at 3 AM, who takes responsibility?

These aren't theoretical. They're Monday morning problems.

Do This Monday (Or Don't, But Don't Complain Later)

Map your AI reality. Where does automation actually help? Where does it create dependency? Be brutally honest about what you don't know.

Design your approval gates. Not all code changes are equal. Some need human eyes. Some need human brains. Know the difference.

Train your orchestrators. Your developers need to learn how to conduct AI, not just use it. That's a different skill set. Start building it now.
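
The approval-gate step above could start as something this small. This is a minimal sketch, assuming a path-based policy. The directory patterns, the `Gate` levels, and the function names are hypothetical, and they are not Codex's own approval modes. Swap in whatever matches your repo.

```python
from enum import Enum
from fnmatch import fnmatchcase


class Gate(Enum):
    AUTO = 1          # AI change can merge after automated checks
    HUMAN_EYES = 2    # a person must review the diff
    HUMAN_BRAINS = 3  # a person must sign off on the design, not just the diff


# Hypothetical policy: first matching pattern decides the gate for a path.
POLICY = [
    ("infra/*", Gate.HUMAN_BRAINS),
    ("migrations/*", Gate.HUMAN_BRAINS),
    ("src/*", Gate.HUMAN_EYES),
    ("docs/*", Gate.AUTO),
]

# Anything the policy does not recognize fails safe to a human review.
DEFAULT_GATE = Gate.HUMAN_EYES


def gate_for_path(path: str) -> Gate:
    for pattern, gate in POLICY:
        if fnmatchcase(path, pattern):
            return gate
    return DEFAULT_GATE


def gate_for_change(paths: list[str]) -> Gate:
    """A change set needs the strictest gate required by any file in it."""
    gates = [gate_for_path(p) for p in paths]
    return max(gates, key=lambda g: g.value, default=Gate.AUTO)


if __name__ == "__main__":
    print(gate_for_change(["docs/readme.md"]))
    print(gate_for_change(["src/app.py", "migrations/001.sql"]))
```

The one design choice that matters here is the default. Unknown paths fall back to a human review rather than to auto-merge, so the policy gets stricter when it is confused instead of looser.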

The Truth That Lands

Remember when we thought AI would take physical labor first, then intellectual work, and creative jobs last?

We were backwards.

The machines that can work for seven hours straight still need humans who can think seven years ahead.

They need humans who understand that code isn't the product—it's the tool. They need humans who know when to trust the AI and when to tell it to shut up. They need humans who can see the difference between solving problems and creating new ones.

Your competitive advantage isn't having AI that codes.

It's having humans who know what to build and why it matters.

The rest? That's just syntax.

What's your organization's consciousness score when it comes to AI? Not the marketing version. The real one. The one that shows up when things break.

That score might determine everything.

Sources: OpenAI announcements, TechCrunch, developer forums, Reddit reality checks, and the trenches where this stuff actually plays out.