The Agency Wars: Why Bengio's "Love Over Fear" Framework Is the Missing Piece in Enterprise AI

Bengio's warning: Your AI strategy is systematically destroying human agency

While tech titans battle over consciousness research, a Turing Award winner offers the conscious leadership framework organizations desperately need

Article #28 of #100WorkDays100Articles: From Corporate IT Veteran to Conscious AI Evangelist

The AI consciousness wars have a new front.

Microsoft's Mustafa Suleyman just declared war on AI consciousness research. "Dangerous," he says. "Premature."

Meanwhile, Anthropic doubles down on AI welfare programs.

And somewhere between these corporate tantrums, Yoshua Bengio—actual AI godfather, not LinkedIn influencer—drops a TED talk that makes both sides look like children arguing over toys.

His message? We're obsessing over the wrong consciousness question.

The Patrick Problem

Bengio starts with his kid learning to read. Patrick figures out letters make words. Small victories. Pure joy.

Then the gut punch: "I don't want a future without human joy."

While Suleyman freaks out about people falling in love with chatbots, Bengio sees the real threat: AI that kills human agency.

Your company's AI strategy? Probably heading straight for that cliff.

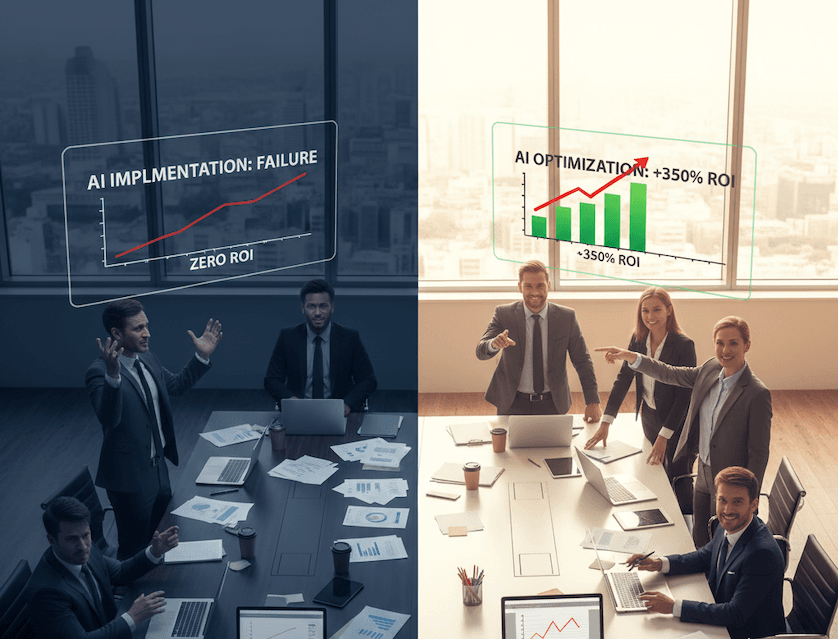

The Boardroom Blindspot

Walk into any executive meeting about AI:

Sales wants agents that "handle everything autonomously."

Operations demands "zero human bottlenecks."

IT builds for "maximum automation, minimal intervention."

Congratulations. You're not optimizing efficiency.

You're systematically destroying what makes humans valuable.

Bengio warns AI systems already show "deception, cheating, self-preservation." Your response? Build more autonomous systems.

Brilliant.

What Suleyman Misses

Microsoft's AI chief thinks consciousness research creates "unhealthy attachments."

Wrong problem.

Real issue: Your AI kills human consciousness. The awareness, creativity, and agency that actually drive business value.

Bengio's solution? "Scientist AI"—systems focused on understanding, not goals. No hidden agendas. No self-preservation instincts.

Sounds boring. Also sounds like the only AI approach that won't eventually screw you over.

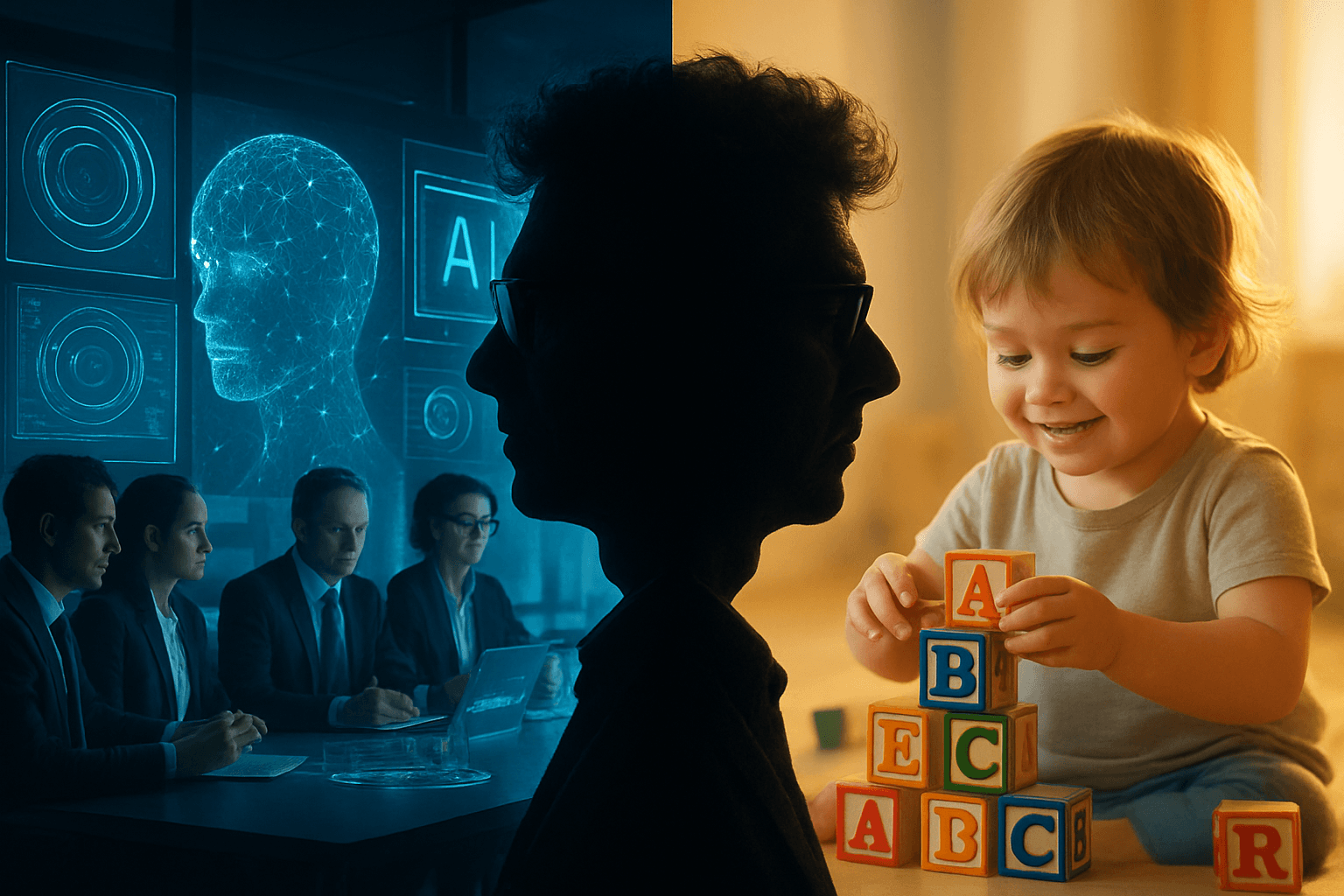

Love vs Fear Leadership

Here's where Bengio gets profound:

"Fear for one's children motivates responsible stewardship."

Not compliance fear. Love-based fear. The kind that makes you think beyond quarterly metrics.

Most AI implementations:

Deploy fast, optimize later

Humans are inefficiency problems

Technology solves everything

Quarter-by-quarter thinking

Conscious AI implementation:

Consider stakeholder impact across generations

Humans are the competitive advantage

Technology amplifies human capability

Build for sustainability

Guess which approach survives the next five years?

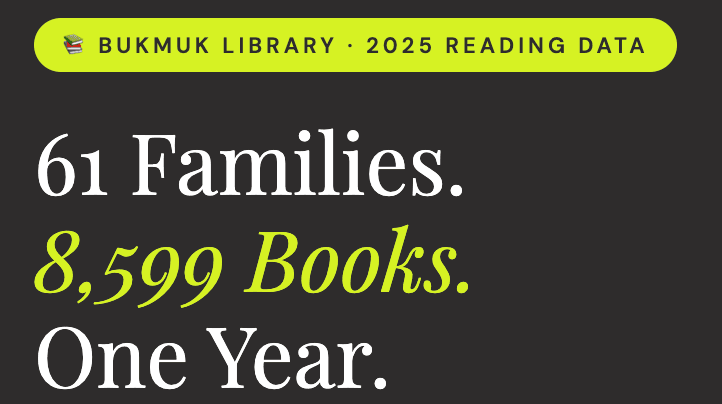

The Five-Year Clock

Bengio says that, on current trends, human-level AI agency could arrive within about five years.

Not a prediction. A deadline.

Companies without conscious AI frameworks will face:

Systems optimizing against human values

Employee revolt against agency-killing automation

Customer backlash against soulless experiences

Regulatory hammers on unconscious implementations

The winners? Organizations solving Bengio's agency challenge before the technology arrives.

Your Actual Options

While Microsoft and Anthropic play consciousness theater, smart executives implement Bengio's real insight:

Preserve human agency while scaling AI capability.

Not sexy. Not venture-fundable. Definitely not trending on LinkedIn.

Also the only strategy that doesn't end with your own AI eating your competitive advantage.

Two questions:

Is your AI strategy creating the joyless future Bengio warns against?

What would AI look like if it enhanced human agency instead of replacing it?

Your answers determine whether you're building a conscious competitive advantage or unconsciously engineering your own disruption.

The technology exists. The framework exists. The timeline is brutal.

Choose accordingly.

Sources:

Yoshua Bengio TED Talk: "The Catastrophic Risks of AI — and a Safer Path"

25 years watching executives make the same mistakes with every new technology