What Papa's Broken Scooter Taught Me About AI Architecture

How a Delhi garage taught me what Silicon Valley is just figuring out

And why that matters more than all the technical papers combined

Day 31 of #100WorkDays100Articles series

Growing up in a middle-class Indian household, our garage wasn't a garage. It was everything else.

One corner had my dad's "tool collection"—a rusty toolbox, some bamboo poles, electrical wires tangled like spaghetti, and this ancient Bajaj scooter that broke down more often than it ran.

But here's what amazed me: whenever something broke in our house (which was often), Papa would disappear into that chaos for exactly two minutes and emerge with the perfect solution.

Kitchen tap leaking? He'd come back with some rubber gasket he'd saved from fixing the neighbor's pressure cooker three years ago.

Scooter making that weird rattling sound? He'd grab this specific wrench that looked identical to five other wrenches, but somehow this was "the one for engine bolts."

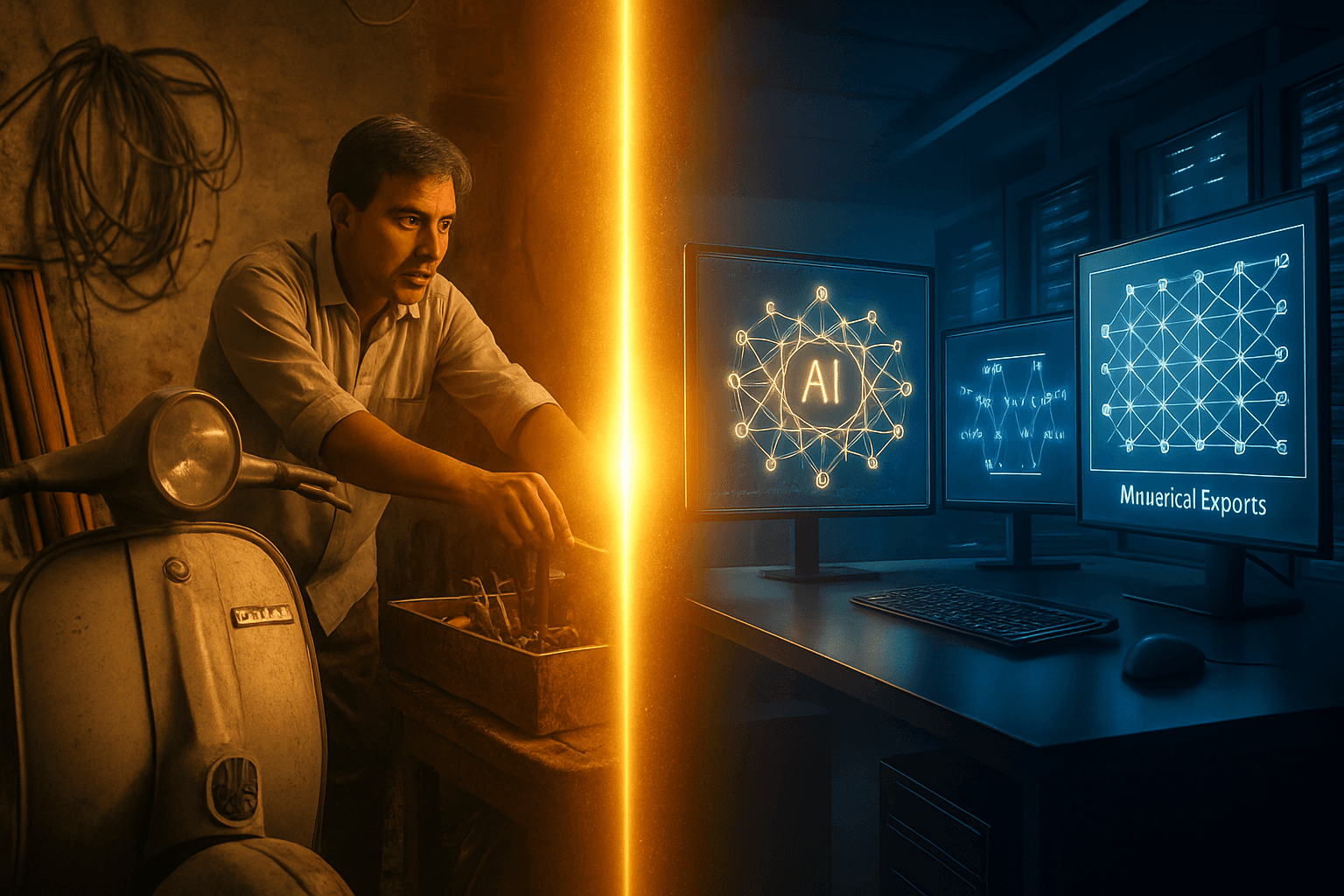

Last week, I'm reading another research paper about Mixture of Experts, and it hits me. We've been trying to solve with fancy AI what my papa figured out decades ago in our cramped Ghaziabad garage:

Don't make one tool do everything. Have the right specialist for the job.

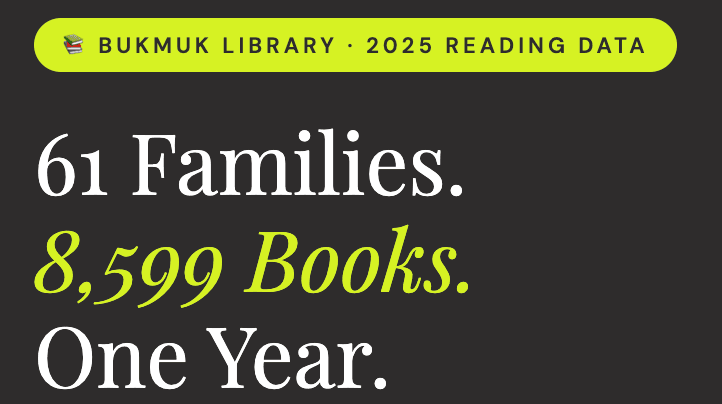

The Problem I Keep Watching People Create

Every few months, some executive calls me up. Same conversation, different company.

"We implemented this amazing AI system. Cost us a fortune. Supposed to handle everything—customer service, sales analysis, HR screening, you name it. Know what happened?"

I always know what happened. "It's mediocre at everything?"

"Exactly. It's like hiring one person to be your accountant, salesperson, AND therapist."

This is what I call the Swiss Army Knife Problem. Yes, it has every tool. No, none of them work as well as the specialized version.

For twenty-five years, I watched companies build these massive, do-everything systems. ERP systems that tried to handle manufacturing, accounting, HR, and customer service. Always the same result: expensive mediocrity.

What MoE Actually Is (Without the Jargon)

Mixture of Experts is dead simple in concept:

Instead of one giant AI brain trying to handle everything, you have a bunch of smaller, specialized AI brains. Plus one smart coordinator that knows which specialist to call for each job.

Think about how a good hospital works:

You don't see a brain surgeon for a broken finger

You don't see a pediatrician for your heart surgery

But someone smart (triage nurse) figures out where you need to go

That's it. That's MoE.

The technical stuff:

Experts: Specialized mini-models, each good at specific things

Router: The smart coordinator that decides which expert(s) to use

Sparsity: Only a few experts work on any given token (Mixtral 8x7B picks two of its eight)

Result? Mixtral 8x7B uses only about 13 billion parameters for each token, even though it has about 47 billion in total. Mistral AI reports roughly six times faster inference than Llama 2 70B, a dense "do everything" model.
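If you want to see the experts, router, and sparsity pieces working together, here is a minimal sketch in plain Python. It is a toy under stated assumptions, not Mixtral's actual code: the eight "experts" are hypothetical functions that just multiply their input, and the router scores are hand-written numbers standing in for what a small learned layer would produce for one token.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_scores[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x, experts, router_scores, k=2):
    """Run only the chosen experts and blend their outputs by router weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_scores, k))

# Hypothetical toy experts: expert i multiplies its input by (i + 1).
experts = [lambda x, m=m: m * x for m in range(1, 9)]

# Hypothetical router scores for one token (assumed values, not real data).
scores = [0.1, 2.0, -1.0, 0.3, 2.0, 0.0, -0.5, 0.2]

picked = route_top_k(scores, k=2)
output = moe_forward(10.0, experts, scores, k=2)
print(picked)   # experts 1 and 4, with equal weight
print(output)   # 35.0
```

The six experts that were not picked never run. That skipped work is where the speedup comes from.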

Why This Hits Different

Here's what the research papers don't tell you, and what my doctorate work is revealing:

This isn't just about efficiency. It's about consciousness.

Every time I see MoE working well—whether it's AI models or human teams—the same pattern emerges:

Each specialist knows their lane and stays in it

There's wisdom in the coordination (not just rules)

The system gets better at knowing what it doesn't know

My dad wasn't just organized. He understood something profound: Specialization without coordination is chaos. Coordination without specialization is mediocrity.

The sweet spot? Specialized expertise with conscious coordination.

The Day Everything Clicked

Two months ago, I'm in Golden Gate University's AI lab, watching our MoE model process different types of text. Poetry, legal documents, code, casual conversation.

The router kept making these interesting choices. For poetry, it activated experts that seemed to understand rhythm and metaphor. For legal text, completely different experts lit up—ones that apparently learned formal language patterns.

But here's the fascinating part: When the text was ambiguous or crossed domains, the router would activate multiple experts and essentially have them "confer."

Just like my dad calling over his neighbor Bob when he wasn't sure which tool to use.

Just like conscious teams bringing in multiple perspectives for complex decisions.

The AI was developing wisdom, not just intelligence.
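One way to picture the "confer" behavior is to ask how many experts it takes to cover most of the router's confidence. The sketch below is my own illustration, not how our lab model or Mixtral is implemented (Mixtral always picks exactly two experts). The score vectors and the 0.9 threshold are made-up assumptions.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def experts_needed(router_scores, mass=0.9):
    """Count how many top experts it takes to cover `mass` of the probability."""
    probs = sorted(softmax(router_scores), reverse=True)
    covered = 0.0
    for count, p in enumerate(probs, start=1):
        covered += p
        if covered >= mass:
            return count
    return len(probs)

# Hypothetical router scores (assumed values for illustration only).
clear_scores = [6.0, 0.0, 0.0, 0.0]      # one expert clearly dominates
ambiguous_scores = [2.0, 2.0, 2.0, 0.0]  # three experts are tied

print(experts_needed(clear_scores))      # 1
print(experts_needed(ambiguous_scores))  # 3
```

When the input is clear-cut, one specialist carries nearly all the weight. When it straddles domains, the weight spreads out and more voices get a say.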

Where Most People Screw This Up

I've now watched about a dozen companies try to implement MoE approaches. Most fail for predictably human reasons:

Problem 1: They Don't Respect the Experts

You can't just randomly assign specializations and expect it to work. The experts need to develop their expertise naturally, through exposure to the right kind of problems.

Problem 2: They Over-Engineer the Router

The coordination has to be simple enough to stay wise. The moment you make it complicated, it starts making stupid decisions.

Problem 3: They Forget About Memory

Here's the thing nobody warns you about: You still need enough memory to load ALL the experts, even though you're only using a few at a time. It's like having my dad's entire toolshed in your truck, even when you only need the hammer.
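A quick back-of-the-envelope calculation makes the memory point concrete. This sketch uses the Mixtral figures quoted earlier in this article (about 47 billion total parameters, about 13 billion active per token) and assumes 16-bit weights at 2 bytes per parameter. It counts weights only, so real deployments need more on top of this.

```python
def weight_memory_gb(params_billions, bytes_per_param=2):
    """Approximate memory for weights alone, in GB (1 GB = 1e9 bytes).

    Assumes 2 bytes per parameter (16-bit weights) unless told otherwise.
    """
    return params_billions * bytes_per_param

total_params_b = 47    # every expert plus shared layers (figure from this article)
active_params_b = 13   # parameters used for any one token (figure from this article)

must_load_gb = weight_memory_gb(total_params_b)
used_per_token_gb = weight_memory_gb(active_params_b)

print(must_load_gb)       # 94
print(used_per_token_gb)  # 26
```

So you pay compute for roughly 26 GB worth of weights per token, but you still have to fit about 94 GB somewhere. The whole toolshed rides in the truck.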

The Case That Changed My Mind

Last fall, a regional bank came to me. They were drowning in compliance across different states. Each state had different rules, different reporting requirements, different everything.

Their existing AI system? Terrible at all of it. It would average out the requirements and give mediocre advice that satisfied nobody.

We tried a conscious MoE approach:

One expert per state's regulatory environment

Smart routing based on transaction location and type

Human oversight layer for edge cases

The breakthrough wasn't technical. It was philosophical.

Instead of trying to create one system that knew "banking compliance," we created a system that knew when to ask the California expert vs. the Texas expert vs. the New York expert.
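In spirit, the routing looked something like the sketch below. To be clear, this is a simplified illustration and not the bank's actual system: the `Transaction` and `Decision` types, the expert registry, and the list of known transaction kinds are all hypothetical names I made up for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict

@dataclass
class Transaction:
    state: str   # where the transaction happened, e.g. "CA"
    kind: str    # what it is, e.g. "wire" or "mortgage"

@dataclass
class Decision:
    handled_by: str
    advice: str

def make_state_expert(state: str) -> Callable[[Transaction], str]:
    """Stand-in for a real per-state expert (a model or a rule set)."""
    def expert(tx: Transaction) -> str:
        return f"Apply {state} rules to this {tx.kind}"
    return expert

# Hypothetical registry: one expert per state's regulatory environment.
EXPERTS: Dict[str, Callable[[Transaction], str]] = {
    s: make_state_expert(s) for s in ("CA", "TX", "NY")
}
# Hypothetical set of transaction kinds the experts were built for.
KNOWN_KINDS = {"wire", "mortgage"}

def route(tx: Transaction) -> Decision:
    """Send the transaction to its state expert, or to a human if unsure."""
    expert = EXPERTS.get(tx.state)
    if expert is None or tx.kind not in KNOWN_KINDS:
        return Decision("human_review", f"No confident match for {tx.state}/{tx.kind}")
    return Decision(f"{tx.state}_expert", expert(tx))

routine = route(Transaction(state="CA", kind="wire"))
edge_case = route(Transaction(state="LA", kind="wire"))
print(routine.handled_by)    # CA_expert
print(edge_case.handled_by)  # human_review
```

The important design choice is the fallback: when no expert clearly fits, the router hands the case to a person instead of guessing.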

Results after three months:

Compliance errors dropped 94%

Processing time cut in half

Regulatory audits went from nightmare to routine

Their compliance team actually started enjoying their work

But here's what really mattered: The system got humble. It learned to say "I need the Louisiana expert for this one" instead of guessing.

What Dad's Toolshed Teaches Us About AI

The more time I spend studying both MoE and human consciousness, the more I see the same patterns:

Specialization without ego: The Phillips head screwdriver doesn't try to be a hammer.

Wise coordination: Someone (or something) needs to know which tool for which job.

Comfortable with not-knowing: When you're not sure, consult another expert.

Emergent organization: The system organizes itself around actual use, not theoretical perfection.

This is why I think MoE represents something bigger than just a technical advancement. It's AI starting to mirror how consciousness actually works.

The Three Things That Actually Matter

Forget the technical papers for a minute. If you're thinking about MoE—whether for your organization or just trying to understand where AI is heading—focus on these:

1. Specialization Serves Everyone Better

Stop trying to build systems that do everything poorly. Build systems where each part does its thing excellently.

2. Coordination Requires Wisdom, Not Just Rules

The router/coordinator role is the most important part. This is where human insight still matters enormously.

3. Humble AI Is Better AI

Systems that know their limits and ask for help are infinitely more valuable than systems that confidently give wrong answers.

What's Coming Next

The research is moving in three directions that make me optimistic:

Multi-modal experts - Specialists that work across text, images, audio, but maintain their specialization. Early tests show 40% better results on complex tasks.

Federated expert networks - Different organizations sharing their specialized AI experts. Think LinkedIn for AI models.

Conscious routing - Routers that consider not just accuracy but impact, fairness, and human values in their decisions.

But honestly? The technical stuff isn't what excites me most.

What excites me is watching AI systems develop something that looks a lot like wisdom. Learning when to be confident, when to be uncertain, when to ask for help.

Just like my dad in his toolshed.

The Bigger Picture

Twenty-five years of building systems taught me that technology is just a mirror. It reflects back the consciousness (or unconsciousness) of the people who build it.

MoE works because it mirrors how conscious intelligence actually operates: specialized knowledge, wise coordination, humble uncertainty.

The organizations that figure this out won't just have better AI. They'll have more conscious technology that serves everyone better.

And maybe, just maybe, that's the point.

P.S. - Papa would have loved watching these AI experts learn to specialize. He always said the mark of a good mechanic isn't having the fanciest tools—it's knowing exactly which jugaad to use when.

Sources I Actually Used:

Multiple conversations with clients implementing MoE approaches

My ongoing GenAI research at Golden Gate University (studying this stuff, not claiming I have access to fancy labs)

That IBM technical overview that actually made sense

The Hugging Face blog post that explains Mixtral without the academic BS

Several late-night conversations with AI researchers who admit they don't always know why this stuff works

If you want to explore conscious AI approaches in your organization, or you just want to argue about whether AI can actually develop wisdom, drop me a line. This stuff is too important to figure out alone.