The Emperor's New Algorithms: Why 99.3% of "Explainable" AI Has Never Met a Human

MIT Study Reveals Only 0.7% of Explainable AI Papers Actually Test on Humans

Day 42 of #100WorkDays100Articles

From corporate architect to consciousness advocate: documenting the journey toward AI that serves humans, not spreadsheets

Here's something that'll wake you up faster than your third espresso: researchers at MIT Lincoln Laboratory just reviewed 18,254 academic papers about "explainable AI" and found that only 126 — that's 0.7% — actually bothered to test whether humans could understand the explanations.

Let that sink in.

We've got thousands of researchers publishing papers about making AI understandable to humans. And 99.3% of them never asked a single human if they understood anything.
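If you want to check the headline numbers yourself, the arithmetic is short. This is just a quick sketch using the two counts quoted above; the variable names are mine, not the researchers'.

```python
# Counts quoted in this article: papers reviewed, and papers that tested on humans.
papers_reviewed = 18_254
papers_with_human_tests = 126

# Share of papers that validated explanations with people, as a percentage.
share_tested = papers_with_human_tests / papers_reviewed * 100
share_untested = 100 - share_tested

print(f"Tested on humans: {share_tested:.1f}%")
print(f"Never tested on humans: {share_untested:.1f}%")
```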

It's like designing a wheelchair ramp without ever meeting someone who uses a wheelchair. Then calling yourself an accessibility expert.

The Trust Exercise Nobody's Doing

Ashley Suh and her team at MIT didn't set out to expose an industry-wide delusion. They started with a simple question: "Of all the papers claiming to make AI explainable to humans, how many actually prove it?"

They expected maybe 40% would include human validation. Maybe 30% if they were being pessimistic.

The actual number? Less than one percent.

"We had no idea it would be 0.7%," Suh admits in the research.

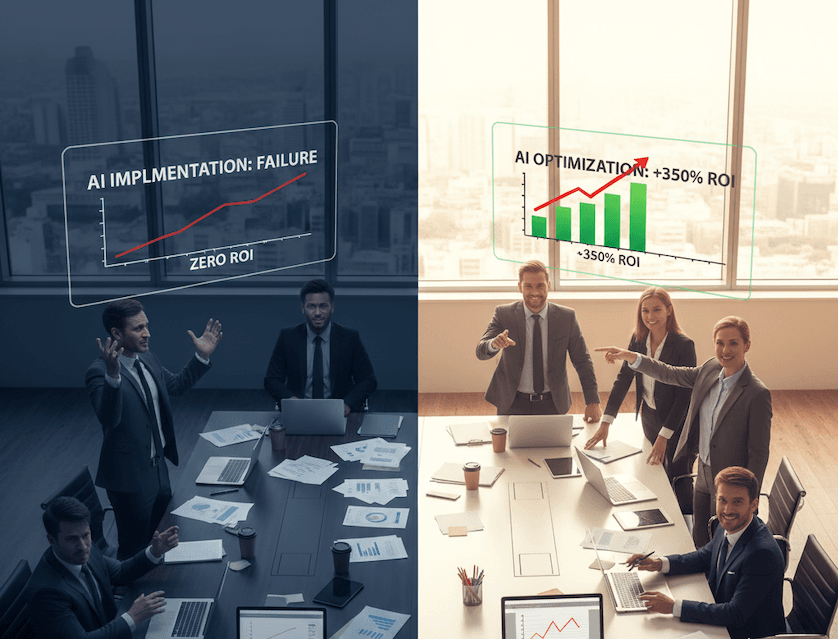

Think about what this means for your enterprise AI strategy.

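Every vendor pitching you their "transparent" or "interpretable" or "trustworthy" AI system? The 0.7% figure describes academic papers, not products, but if the research behind these systems is any guide, the odds are high that they've never tested whether anyone outside their engineering team can actually understand how it works.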

The Signal That Never Gets Received

Here's where it gets interesting — and a bit philosophical.

The MIT researchers frame explainability as a communication problem: you send a signal (the AI's explanation), but that doesn't mean the signal was received or understood.

"In order for something to be considered 'interpretable' or 'explainable,'" the case study notes, "not only must it be shown that a signal was sent, but it must also be shown that the signal was received and understood sufficiently for the given task."

This isn't just academic hair-splitting. This is the difference between AI that empowers human decision-making and AI that creates an illusion of transparency while making humans passive observers of their own obsolescence.

What the Field Is Actually Measuring

So if researchers aren't testing explainability with humans, what are they doing?

They're checking boxes. Using "loosely defined criteria" that signal they're engaged in explainability work:

Presenting outputs in natural language

Making responses "concise" (whatever that means)

Using visualization techniques

Generating feature importance scores

As MIT researcher Hosea Siu puts it: "These qualities are neither necessary nor sufficient conditions for explainability — the proof is in the evidence that someone has examined an 'AI explanation' and used it in a meaningful way."

Reading this, I'm reminded of every corporate AI initiative I witnessed in my 25 years. The endless PowerPoints about "transparency" and "governance." The confidence with which we'd present dashboards nobody understood. The metrics that measured everything except whether anyone could actually use the damn system.

We weren't lying. We were just measuring the wrong things.

The Disability Studies Wake-Up Call

When the team presented their findings at the Association for Computing Machinery's conference, they got confirmation that this problem extends far beyond AI.

The story that stuck with me: researchers in disability studies shared that developers often "simulate" disability by having blindfolded sighted people test tools meant for blind users.

Read that again.

Instead of involving the actual humans they're designing for, they're creating elaborate proxies and calling it validation.

This is what happens when we build systems based on our assumptions about users rather than their lived reality. It's what happens when efficiency trumps empathy. When we convince ourselves that our mental models are good enough.

They're not.

What This Means for Your Enterprise

If you're a CXO reading this, here's your uncomfortable reality check:

That AI system your team is deploying? The one with the "explainable" tag that justified the budget approval? If the research literature is any guide, the odds are overwhelming that nobody's actually proven humans can understand its explanations.

Your compliance team thinks you've got transparency covered because the vendor showed them feature attribution scores such as SHAP values. Your executives think they understand the system because the UI uses plain English.

But has anyone actually tested whether the people who'll rely on these explanations can use them to make better decisions?

Has anyone measured whether the "explainability" features build genuine understanding or just create a false sense of security?

Most likely: no.

The CONSCIOUS AI Audit Question You Need to Ask

This is where the CONSCIOUS AI framework becomes practical. Not theoretical. Not aspirational. Necessary.

When vendors pitch you explainable AI, ask them this:

"Show me the human validation study."

Not the technical specs. Not the architectural diagrams. Not the list of explainability features they've built.

The actual research showing that real humans — people who match your user profile — could understand the explanations and use them effectively.

If they can't produce it, you're buying theater.

Expensive, sophisticated, peer-reviewed theater. But theater nonetheless.

The Cybersecurity Case That Proves the Point

Suh's team has another paper that brings this home. They tried implementing explainable AI techniques (SHAP and LIME — industry standards, mind you) for cybersecurity analysts doing source code classification.

The result?

State-of-the-art explainability methods were "lost in translation when interpreted by people with little AI expertise, despite these techniques being marketed for non-technical users."

The explanations were too localized. Too post-hoc. Too disconnected from the actual real-time workflow analysts needed.

The AI could explain itself. Humans just couldn't use those explanations for anything meaningful.

This is what happens when we build for AI systems instead of human systems that happen to include AI.

The Way Forward Isn't More Features

Here's what I learned in my corporate years that applies perfectly here:

Adding more explainability features won't fix this. Building more sophisticated visualization tools won't solve it. Creating better natural language summaries won't close the gap.

The problem isn't technical sophistication. It's human connection.

You can't design for humans without involving humans. Full stop.

"When you design something that's meant to be interpreted, understood, and trusted by a real person," Siu says, "you ought to test whether it'll work as you intend with that person."

This seems blindingly obvious. Yet 99.3% of the field isn't doing it.

What Conscious AI Implementation Looks Like

Here's what changes when you take this seriously:

Before deployment:

Run actual user studies with real stakeholders

Test explanations in context, not in lab conditions

Measure understanding, not just satisfaction

Iterate based on human feedback, not engineering assumptions
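To make "measure understanding, not just satisfaction" concrete, here is a minimal sketch of one possible approach: show people an explanation, ask them to predict what the model will do on a new case, and compare that with how satisfied they say they are. The `Response` record, the field names, and every data point below are hypothetical and invented for illustration; none of it comes from the MIT study.

```python
from dataclasses import dataclass


@dataclass
class Response:
    participant: str
    predicted_model_output: str  # what the participant expects the model to say
    actual_model_output: str     # what the model actually said
    satisfaction: int            # self-reported rating, 1 (low) to 5 (high)


def understanding_rate(responses):
    """Share of responses where the participant predicted the model correctly."""
    correct = sum(1 for r in responses
                  if r.predicted_model_output == r.actual_model_output)
    return correct / len(responses)


def mean_satisfaction(responses):
    """Average self-reported satisfaction across all responses."""
    return sum(r.satisfaction for r in responses) / len(responses)


# Hypothetical study data: people like the explanations but can't predict the model.
responses = [
    Response("p1", "approve", "approve", 5),
    Response("p2", "approve", "reject", 4),
    Response("p3", "reject", "approve", 5),
    Response("p4", "reject", "reject", 4),
    Response("p5", "approve", "reject", 4),
]

print(f"Understanding rate: {understanding_rate(responses):.0%}")
print(f"Mean satisfaction:  {mean_satisfaction(responses):.1f} / 5")
```

In this made-up example, satisfaction is high while fewer than half of the predictions are right. A satisfaction survey alone would never surface that gap.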

During selection:

Demand evidence of human validation

Ask about the profile of users in their studies

Verify their test conditions match your use case

Walk away if they can't produce credible data

After implementation:

Monitor whether explanations change behavior

Track whether users trust the system more or less over time

Measure decision quality, not just decision speed

Stay humble about what you don't know
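As a rough sketch of what "decision quality, not just decision speed" could look like in practice, the snippet below compares two groups of logged decisions, one made with explanations and one without. The `summarize` helper and all of the numbers are hypothetical and exist only to show the shape of the comparison.

```python
from statistics import median


def summarize(decisions):
    """decisions: list of (was_correct, seconds_taken) pairs.

    Returns (accuracy, median seconds per decision).
    """
    accuracy = sum(1 for ok, _ in decisions if ok) / len(decisions)
    typical_seconds = median(seconds for _, seconds in decisions)
    return accuracy, typical_seconds


# Hypothetical logs: each pair is (decision was correct, seconds taken).
without_explanations = [(True, 40), (False, 55), (True, 60), (True, 45)]
with_explanations = [(True, 20), (False, 25), (False, 30), (True, 22)]

for label, log in [("without", without_explanations), ("with", with_explanations)]:
    accuracy, seconds = summarize(log)
    print(f"{label:>8} explanations: accuracy {accuracy:.0%}, median {seconds:.1f}s")
```

In this invented data, the group with explanations decides much faster but gets more decisions wrong. A dashboard that tracked speed alone would report that as a win.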

This isn't complicated. It's just honest.

The Middle-Class Indian Kid's Perspective

Growing up middle-class in India, I learned early that impressive credentials don't always translate to actual competence. That the person with the fanciest degree might not be the one who actually solves your problem. That surface sophistication often masks fundamental gaps.

This research proves that lesson applies to AI just as much as it did to the various "experts" my parents consulted over the years.

18,000+ papers. Thousands of researchers. Millions in funding. Countless conferences and citations.

And 99.3% of it never bothered to check if any human could actually use what they built.

That's not a knowledge gap. That's a values gap.

The Question That Changes Everything

So here's where I'll leave you:

If your AI can explain itself but humans can't use those explanations, is it actually explainable?

Or is it just performing explainability for an audience of other AI systems and academic reviewers?

Because I'll tell you what I've learned in my journey from corporate IT to conscious AI advocacy:

Real transparency isn't about what your system can produce. It's about what humans can understand. Real trust isn't built through technical features. It's earned through evidence that those features actually work for real people in real contexts.

And real consciousness in AI implementation means having the humility to test your assumptions against human reality — not just once, but continuously.

The MIT researchers are calling for "increased emphasis on human evaluations in XAI studies."

I'm calling for something bigger: a fundamental shift from AI-centered to human-centered validation.

Not because it's trendy. Not because it's what gets published. Because it's the only thing that actually serves the humans these systems are supposedly built for.

#100WorkDays100Articles #ConsciousAI #AIGovernance #XAI #TheSoulTech #HumanCenteredAI

References

MIT Lincoln Laboratory. (2026). "Study finds that explainable AI often isn't tested on humans."

Suh, A., Siu, H., Smith, N., & Hurley, I. (2025). "'Explainable' AI Has Some Explaining to Do." MIT SERC Case Studies.

Suh, A., et al. (2025). "Fewer Than 1% of Explainable AI Papers Validate Explainability with Humans." arXiv:2503.16507.

Suh, A., et al. (2024). "More Questions than Answers? Lessons from Integrating Explainable AI into a Cyber-AI Tool." arXiv:2408.04746.

Abhinav Girotra is a conscious AI evangelist and doctoral candidate at Golden Gate University. After 25 years in Fortune 500 corporate IT, he now advocates for AI systems that enhance rather than replace human agency. Connect with him on LinkedIn or subscribe to TheSoulTech.com for insights on conscious AI implementation.