The Anthropic Flip: What Your Board Needs to Know About AI's Privacy Reckoning

Why Conscious Business Models Beat Privacy Pivots Every Time

Day 25 of #100WorkDays100Articles

The Email That Changed Everything

Tuesday morning, green tea still warm. A notice pops up in my Claude interface: "We're now giving users the choice to allow their data to be used to improve Claude."

Wait. What?

Anthropic, the company that built its reputation on not training on user data, just flipped the script. Until now, it didn't use consumer chat data for model training. Now it wants to train its AI systems on user conversations and coding sessions, and it says it is extending data retention to five years for those who don't opt out.

This isn't another buried privacy update. This is the moment AI companies stopped pretending they could build conscious technology without conscious business models.

What 25 Years in IT Taught Me About This Moment

I've seen enough enterprise pivots to smell desperation. But this? This smells different.

McKinsey's latest research shows that CEO oversight of AI governance is one of the elements most correlated with higher self-reported bottom-line impact from an organization's gen AI use. Meanwhile, industry surveys suggest that more than 70% of consumers hesitate to use AI products if their privacy feels compromised.

The math is brutal: Build trust or die.

The Sacred Pause Strategy

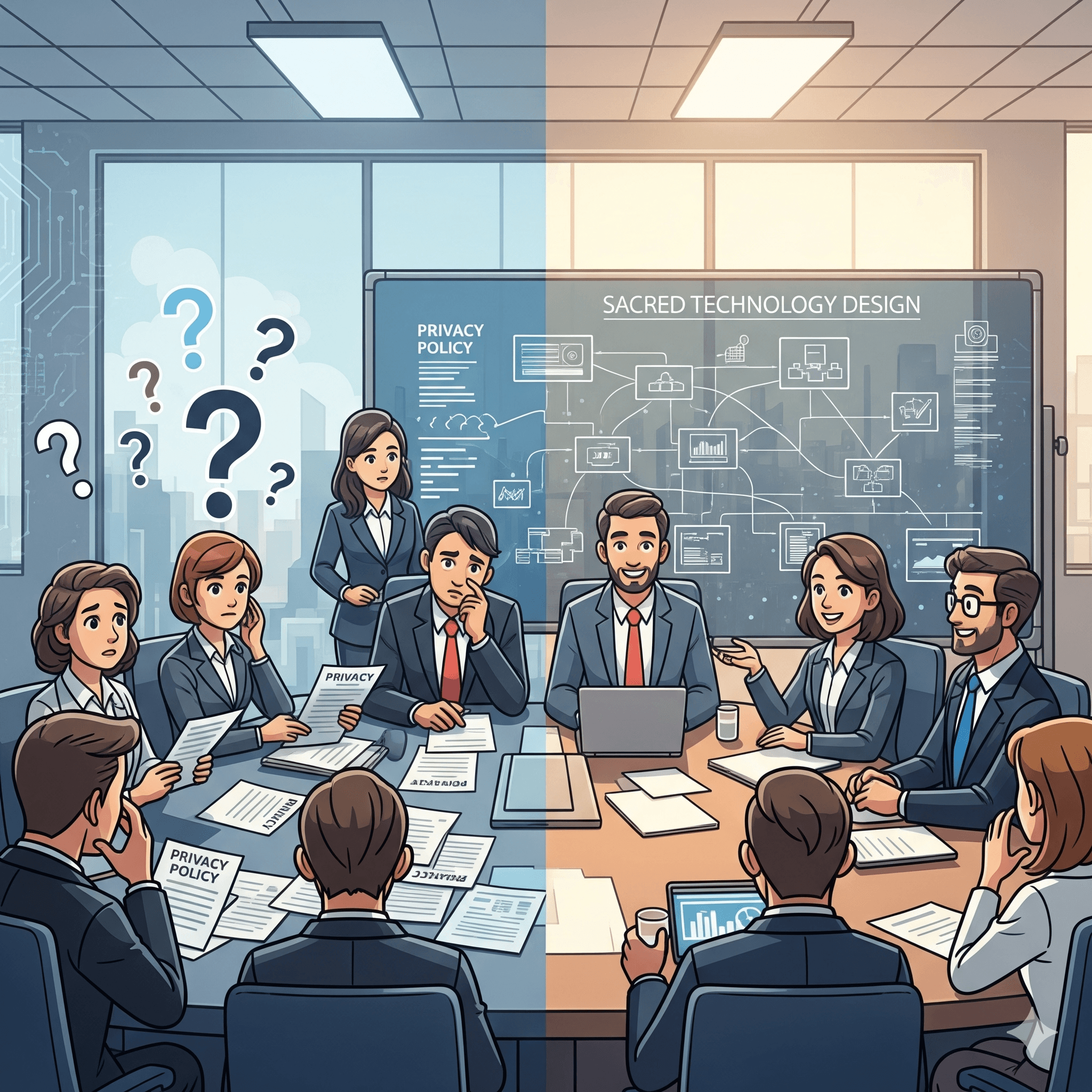

Here's what Anthropic did right (and what your lawyers probably missed):

They gave users until September 28, 2025, to decide. Not immediately. Not buried in an update. A conscious pause.

They made the value exchange transparent. "Help us improve model safety" instead of corporate doublespeak.

They kept it reversible. You can change your mind anytime.

This is what I call Sacred Technology Design—building systems that respect human agency instead of exploiting human laziness.

Your Three Questions for Monday's Board Meeting

Do we know what data our AI vendors are collecting? (Spoiler: You probably don't.)

If our AI systems had consciousness, would they be proud of how they serve our customers? (This question will either get you fired or promoted—no middle ground.)

Are we building trust or just managing compliance? (One creates competitive advantage. The other creates legal bills.)

The Consciousness Competitive Advantage

The conversation around AI governance has moved beyond theoretical frameworks to tangible global action—and most enterprises are still playing catch-up.

The companies that will win the next decade aren't the ones with the best AI. They're the ones with the most conscious AI.

That means designing systems that strengthen relationships instead of extracting data. Building value exchanges that users actually understand. Creating technology that makes people more human, not less.

What I'm Watching Next

Anthropic's move signals something bigger. Agentic AI, the newest wave of AI applications capable of automating discrete tasks and workflows, was billed at CES 2025 as a multitrillion-dollar industry.

When AI systems can act autonomously, the consciousness question becomes existential. Not philosophical—existential. As in, "Will our business exist if we get this wrong?"

The Bottom Line

Every AI privacy crisis is really a consciousness crisis. Every data breach is really a values breach.

Anthropic just showed us what conscious business transformation looks like: messy, transparent, and genuinely respectful of human choice.

Your move.

Tomorrow: Why GitHub Spark's no-code revolution actually demands more consciousness, not less.

LinkedIn: https://www.linkedin.com/pulse/ai-privacy-reckoning-what-anthropics-flip-means-your-strategy-denwc

Research Notes:

Anthropic Consumer Terms Update, August 28, 2025

McKinsey Global AI Survey 2025

Industry privacy trust statistics