The 100-Meter Wire That Taught Me Everything About AI

Or: What Diwali Lights Reveal About Patience, Space, and Building Anything Worth a Damn

I was halfway through untangling 100 meters of Diwali lights when it hit me.

This is exactly what's wrong with how we're building AI systems.

Let me back up.

Sunday morning. Balcony. One massive ball of tangled LED wire that looked like it had been through a washing machine. Maybe two washing machines. The kind of knot that makes you want to just buy new lights and pretend this never happened.

But here's the thing about Diwali lights (and life, and AI, and pretty much everything): shortcuts don't work. You can't force it. You can't rush it. And you definitely can't pretend the knots aren't there.

I grabbed coffee. Spread the wire across the floor. Started from one end.

Pull gently. Find where it crosses. Loosen. Breathe. Repeat.

Three hours later, I had perfect lights strung across my balcony.

Three hours of doing the most boring thing imaginable.

And I learned more about conscious AI implementation in those three hours than in most corporate strategy sessions I've sat through.

The Knots We Don't Want to See

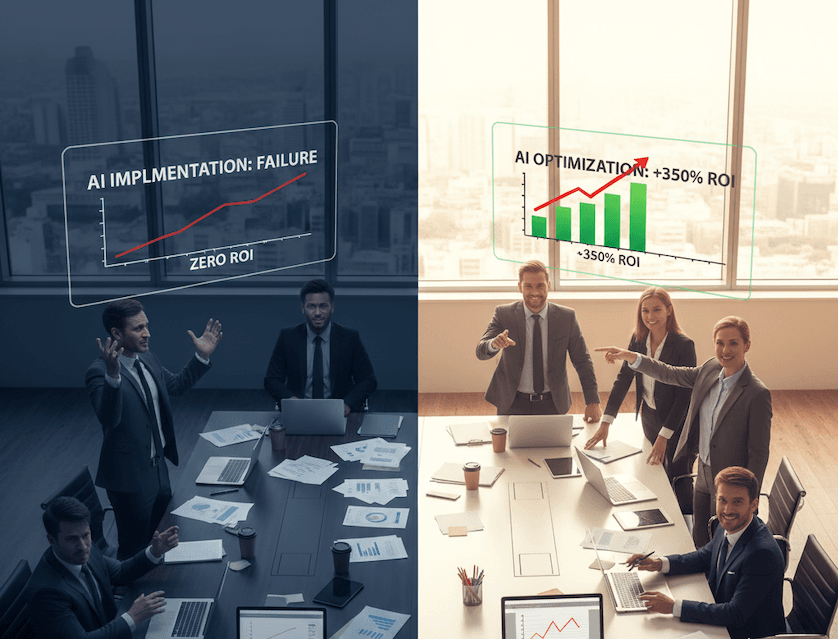

Here's what I watched companies do with AI last week:

CEO says: "We need AI everywhere by Q3."

Team scrambles. Buys the shiniest tools. Forces adoption. Creates "mandatory usage" policies (looking at you, Yahoo Japan).

Six months later: tangled mess that nobody wants to touch.

Sound familiar?

I've been implementing technology in enterprises for 25 years. Same pattern every time: rush the deployment, skip the basics, create bigger problems than you started with.

The Diwali lights don't lie. You can see every mistake immediately. That knot you ignored on the left side? It's now blocking the entire right side. That section you tried to force through? Now you've created three new tangles.

AI is the same. Except the knots are invisible.

They show up as:

Teams avoiding the tools you forced on them

AI making decisions nobody understands

Productivity gains that somehow decrease morale

Technology that's technically working but spiritually dead

(That last one sounds dramatic, but watch someone interact with an AI system they were forced to use. You'll see what I mean.)

What Patient Untangling Actually Looks Like

The lights taught me something I keep forgetting in my GenAI doctorate research: basics aren't basic—they're foundational.

When I was untangling, I had to:

Give it space. Cramming 100 meters into a small corner made everything worse. I spread the wire across my entire balcony. Suddenly I could see what I was working with.

Translation for AI: Stop deploying in isolated pockets. Stop treating it like another software rollout. Give people room to experiment, fail, learn, and actually understand what they're using.

Remove pressure. The moment I got frustrated and pulled hard, I created new knots. Every. Single. Time.

Translation for AI: Mandated adoption creates resistance. Forcing "AI-first" policies without building consciousness creates unconscious usage. You know what's worse than not using AI? Using it unconsciously and pretending you're innovating.

Do the boring work. There's no hack for untangling 100 meters of wire. You can't skip to the end. You can't outsource it. You have to go slow, section by section, knot by knot.

Translation for AI: The CONSCIOUSNESS audit isn't sexy. Stakeholder mapping isn't exciting. Building wisdom protocols feels like overkill. Until you're six months in and realize you built something nobody trusts.

Trust the process. Around hour two, I thought: "This is taking forever. Maybe I should just cut the wire and connect the pieces." That would've worked. For about three days. Then I'd have random dark sections and fire hazards.

Translation for AI: Quick implementations create technical debt. Rushing consciousness work creates spiritual debt. One breaks your system. The other breaks your people.

The Part Nobody Tells You

Here's what surprised me about untangling those lights:

The knots weren't random.

Every tangle had a story. This one was from cramming the lights into a box too fast last year. This one from pulling hard instead of patiently. This one from not understanding the pattern.

Your AI implementation tangles have stories too:

That's from deploying before understanding user needs

That's from copying competitors without strategy

That's from treating technology as a solution rather than a tool

That's from forgetting humans aren't just "end users"

(Seriously, when did we start calling people "end users"? Even the language shows how unconscious we've become.)

I've seen $2B companies spend six months building AI chatbots that can't handle basic questions. Not because the technology failed. Because nobody did the boring work of mapping actual user needs, understanding workflows, building trust.

They tried to skip the untangling.

The knots are still there. Just more expensive.

What This Looks Like in Practice

Last Tuesday I was working with a CXO who said: "Our AI strategy is failing and we don't know why."

I asked: "Did you give your teams space to experiment?"

"No, we set clear KPIs."

"Did you remove pressure to adopt immediately?"

"No, we made it mandatory."

"Did you do the boring stakeholder mapping?"

"No, that would've delayed launch."

"Did you trust your people to find the right implementation pace?"

"No, we hired consultants to accelerate adoption."

They didn't fail at AI. They failed at untangling.

Here's what conscious implementation looks like instead:

Week 1: Give teams AI tools with zero pressure. Just explore.

Weeks 2-4: Collect stories. Where did it help? Where did it confuse? Where did people feel empowered versus diminished?

Month 2: Map those stories to actual workflows. Find the natural fit.

Month 3: Build protocols based on what people actually need, not what vendors sell.

Month 4+: Scale what works. Iterate what doesn't. Remain conscious.

Boring? Yes. Slow? Compared to forced adoption? Not really. Effective? Ask me in six months instead of two weeks.

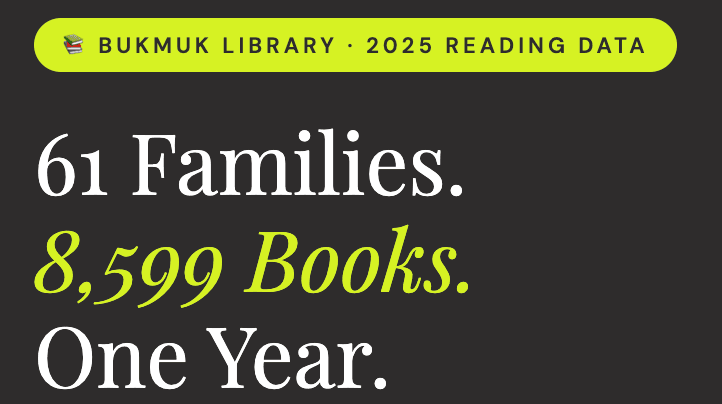

The Pattern I Keep Seeing

In my GenAI research, I'm discovering something that contradicts most AI implementation playbooks:

Speed of adoption inversely correlates with depth of integration.

The faster you force AI on people, the more superficial the usage becomes.

It's like yanking on tangled lights. You might make some progress initially, but you're creating damage you can't see yet.

The companies doing this well?

They're the ones willing to look slow. The ones doing stakeholder consciousness audits before deployment. The ones treating AI integration like untangling lights instead of flipping switches.

(Plot twist: they end up faster in the long run because they don't spend the next year fixing what broke during forced adoption.)

What This Actually Means for You

Look, I'm not saying AI adoption should take years.

I'm saying: Patient doesn't mean slow. It means conscious.

When I untangled those lights, three hours felt long. But you know what would've been slower? Cutting and reconnecting. Buying new lights every Diwali. Creating fire hazards that burn down the building.

(That escalated quickly. But you get the point.)

Your AI implementation probably has knots:

Teams using tools unconsciously

Decisions being automated that should be human

Productivity gains that feel spiritually empty

Technology making you more efficient at things that don't matter

Here's the uncomfortable truth: You can't skip the untangling.

You can:

Give it space (physical, temporal, psychological)

Remove pressure (trust, not mandates)

Do boring basics (consciousness audits, stakeholder mapping, wisdom protocols)

Trust the process (measure integration quality, not just adoption speed)

Or you can keep yanking on the wire and wonder why everything keeps breaking.

The Questions Nobody's Asking

After 25 years of tech implementations and watching the AI rush happening right now, here's what keeps me up:

Are we building AI systems we can understand? Or just AI systems that work (until they don't)?

Are we creating technology that serves consciousness? Or technology that bypasses it?

Are we patient enough to untangle the knots? Or are we just creating faster knots?

My Diwali lights are perfect now. But only because I was willing to spend three hours on my balcony, one knot at a time, doing work that looked slow but was actually the only way forward.

Your AI implementation might need the same kind of patience.

The kind that looks inefficient to everyone watching. The kind that feels tedious in the moment.

The kind that creates something sustainable instead of something shiny.

The kind that actually works when you turn on the lights.

Day #37 of #100WorkDays100Articles. Currently pursuing my GenAI doctorate while untangling corporate AI implementations one conscious decision at a time.

Hit reply if this resonated. I read every response.

P.S. The lights look beautiful now. Worth every minute of patient work. Your AI implementation could feel the same way—if you're willing to do the untangling nobody wants to talk about.